While you search on the web, there’s an excellent likelihood LLMs are concerned someplace within the course of.

If you would like any likelihood of visibility in LLM search, you must perceive easy methods to make your model seen in AI solutions.

The most recent wave of specialists declare to know the “secret” to AI visibility, however the actuality is we’re all nonetheless figuring it out as we go.

Here’s what we do know to date, based mostly on ongoing analysis and experimentation.

LLM search refers to how massive language fashions collect and ship data to customers—whether or not that’s through Google’s AI Overviews, ChatGPT, or Perplexity.

The place serps hand you a listing of choices, an LLM goes straight to producing a pure language response.

Generally that response is predicated on what the mannequin already is aware of, different instances it leans on exterior sources of data like up-to-date internet outcomes.

That second case is what we name LLM search—when the mannequin actively fetches new data, usually from cached internet pages or reside search indices, utilizing a course of often called retrieval-augmented technology (RAG).

Like conventional search, LLM search is turning into an ecosystem in its personal proper—solely the tip purpose is somewhat completely different.

Conventional search was about rating internet pages larger in search outcomes.

LLM search is about making certain that your model and content material are discoverable and extractable in AI-generated solutions.

| Attribute | Conventional search | LLM search |

|---|---|---|

| Primary purpose | Assist folks discover probably the most related internet pages. | Give folks a straight reply in pure language, backed by related sources. |

| Solutions you get | A listing of hyperlinks, snippets, advertisements, and generally panels with fast info. | A written response, usually with brief explanations or just a few cited/talked about sources. |

| The place solutions come from | A continually up to date index of the internet. | A mixture of the mannequin’s coaching knowledge and data retrieved from serps. |

| How contemporary it is | Very contemporary—new pages are crawled and listed all of the time. | Not as contemporary—Retrieves cached variations of internet pages, however largely present. |

| Question composition | Quick-tail, intent-ambiguous key phrase queries. | Conversational, ultra-long-tail queries. |

| What occurs to site visitors | Pushes customers towards web sites, producing clicks. | Intent usually met inside the reply, which means fewer clicks. |

| Methods to affect | search engine optimization finest practices: key phrases, backlinks, website velocity, structured knowledge, and so on. | Being a trusted supply the mannequin would possibly cite: mentions and hyperlinks from authority websites, contemporary, well-structured, and accessible content material, and so on. |

AI firms don’t reveal how LLMs choose sources, so it’s laborious to know easy methods to affect their outputs.

Right here’s what we’ve discovered about LLM optimization to date, based mostly on major and third-party LLM search research.

We studied 75,000 manufacturers throughout thousands and thousands of AI Overviews, and located that branded internet mentions correlated most strongly with model mentions in AI Overviews.

Extra model mentions imply extra coaching examples for a LLM to be taught from.

The LLM successfully “sees” these manufacturers extra throughout coaching, and may higher affiliate them with related subjects.

However that doesn’t imply you must go chasing mentions for mentions’ sake. Focus, as an alternative, on constructing a model price mentioning.

High quality issues greater than quantity.

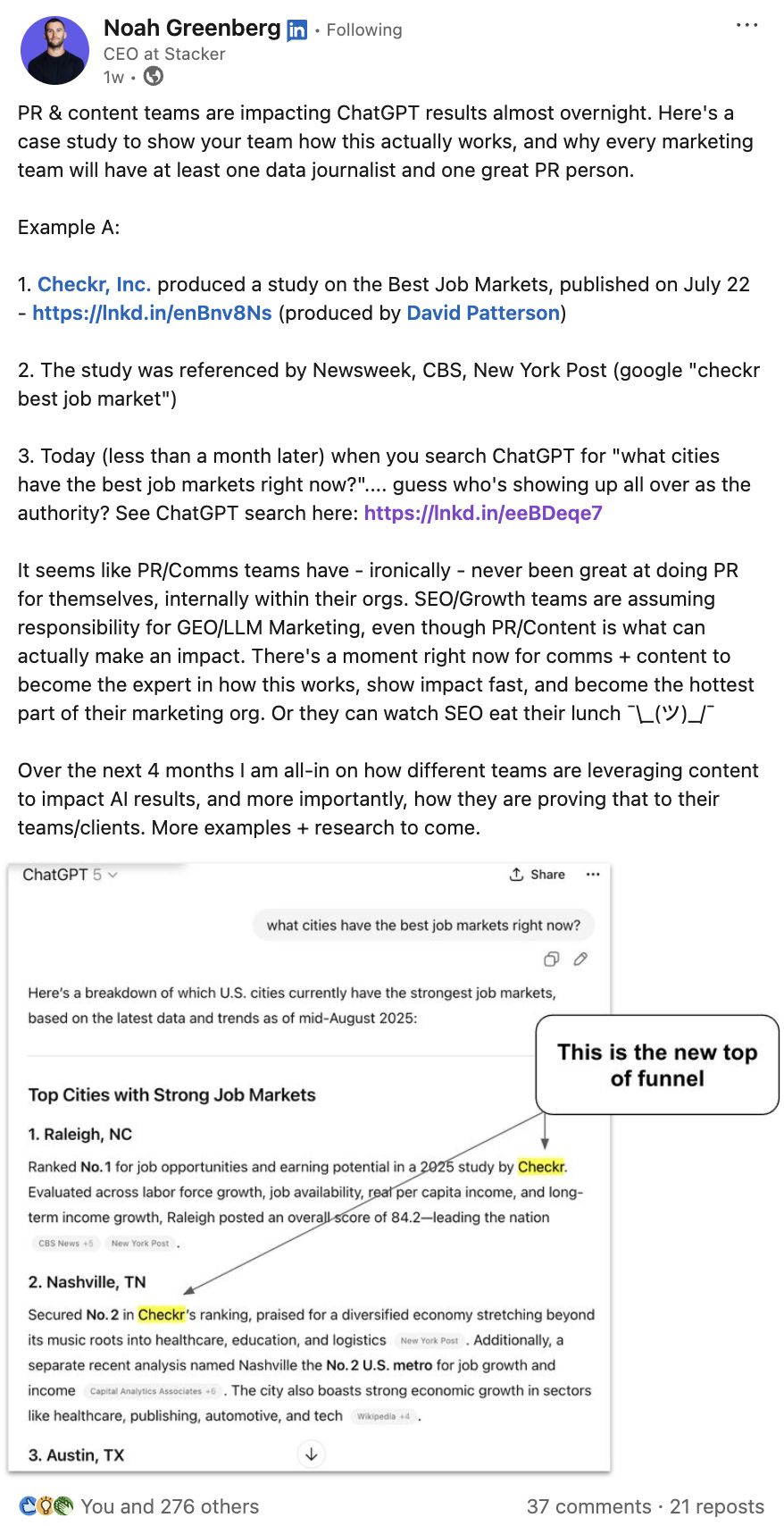

Right here’s proof. Checkr, Inc did a examine on the most effective job markets, which received picked up by not more than a handful of authoritative publications, together with Newsweek and CNBC.

But, throughout the month, Checkr was being talked about constantly in related AI conversations.

I verified this throughout completely different ChatGPT profiles to account for personalization variance, and Checkr was talked about each time.

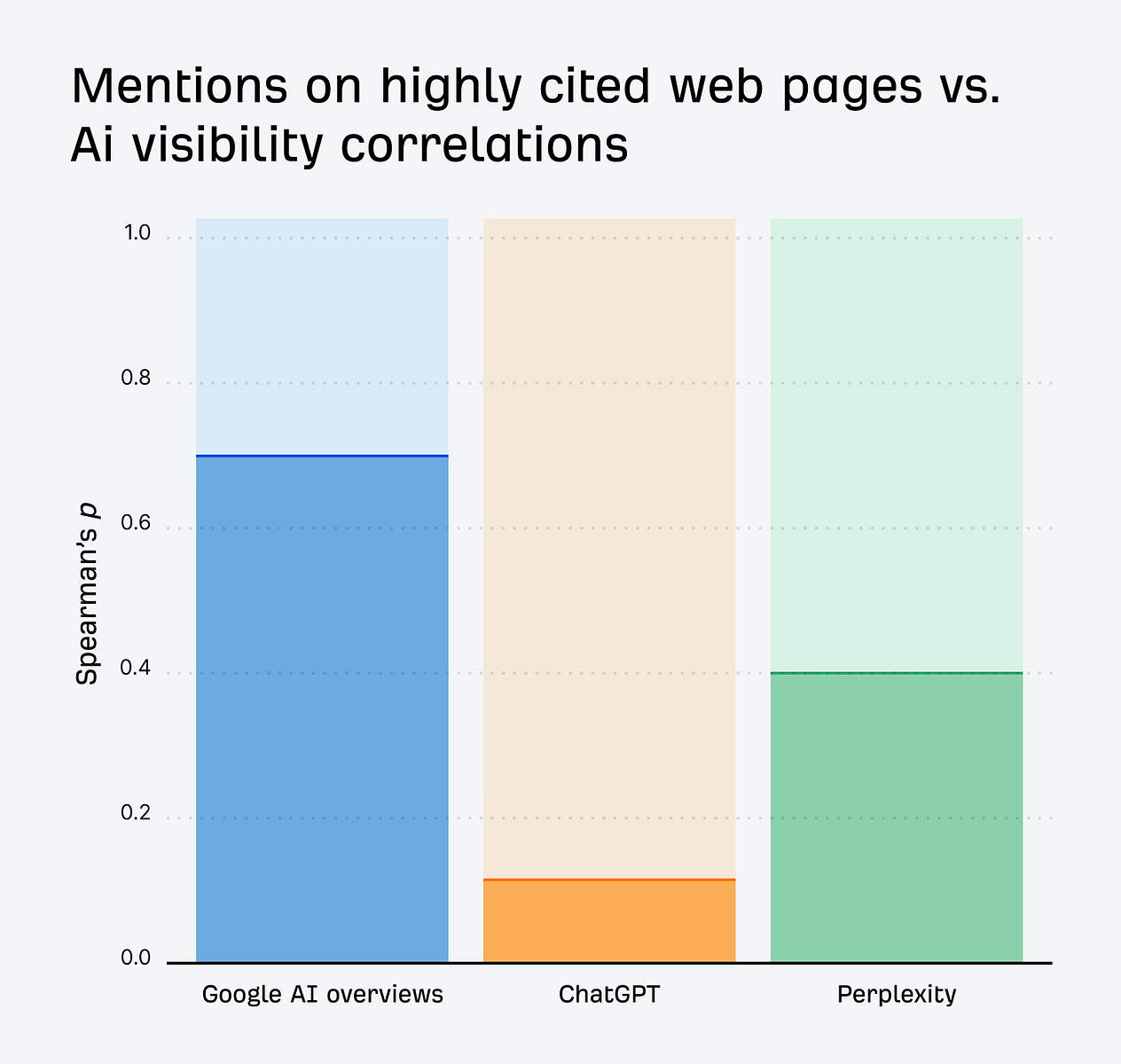

In response to analysis by Ahrefs’ Product Advisor, Patrick Stox, securing placements on pages with excessive authority or excessive site visitors will compound your AI visibility.

Mentions in Google’s AI Overviews correlate strongly with model mentions on heavily-linked pages (ρ ~0.70)—and we see an identical impact for manufacturers exhibiting up on high-traffic pages (ρ ~0.55).

It’s solely a matter of time earlier than AI assistants start assessing qualitative dimensions like sentiment.

When that occurs, constructive associations and lasting authority will turn out to be the true differentiators in LLM search.

Concentrate on constructing high quality consciousness via:

PR & content material partnerships

For sustained AI visibility, collaborate with trusted sources and types. It will provide help to construct these high quality associations.

At Ahrefs it’s no secret that we—like many—try to spice up our authority round AI subjects.

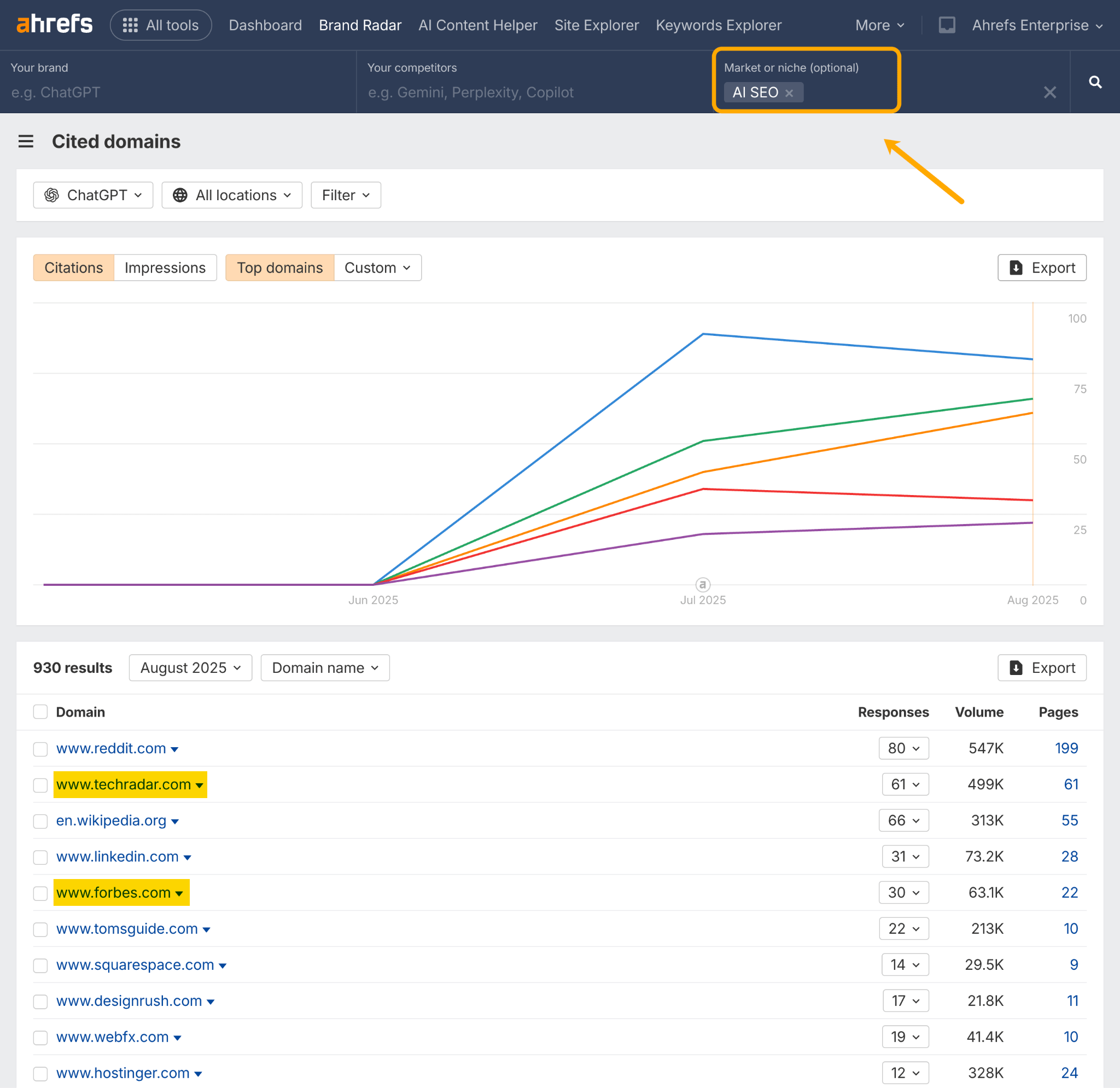

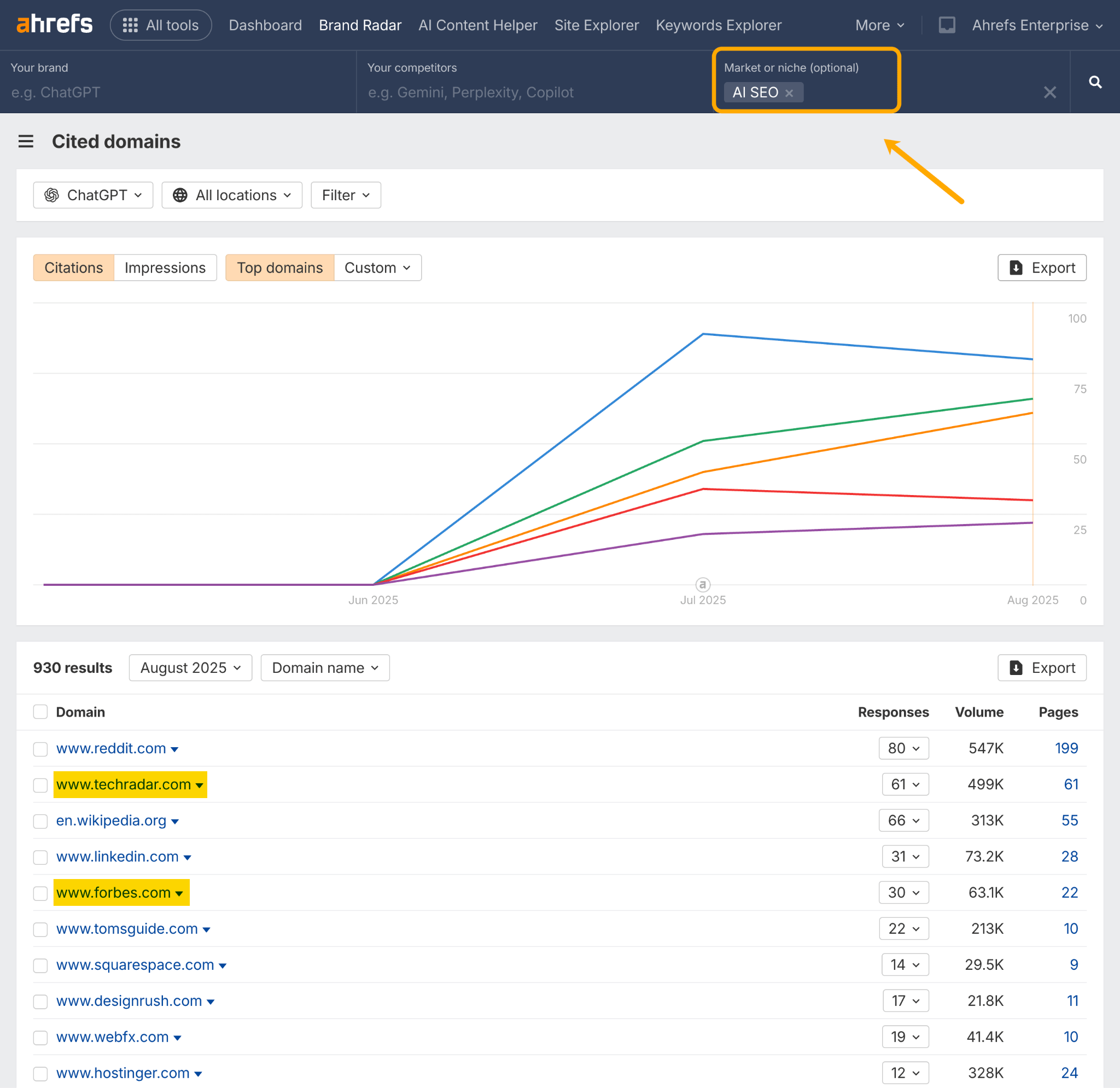

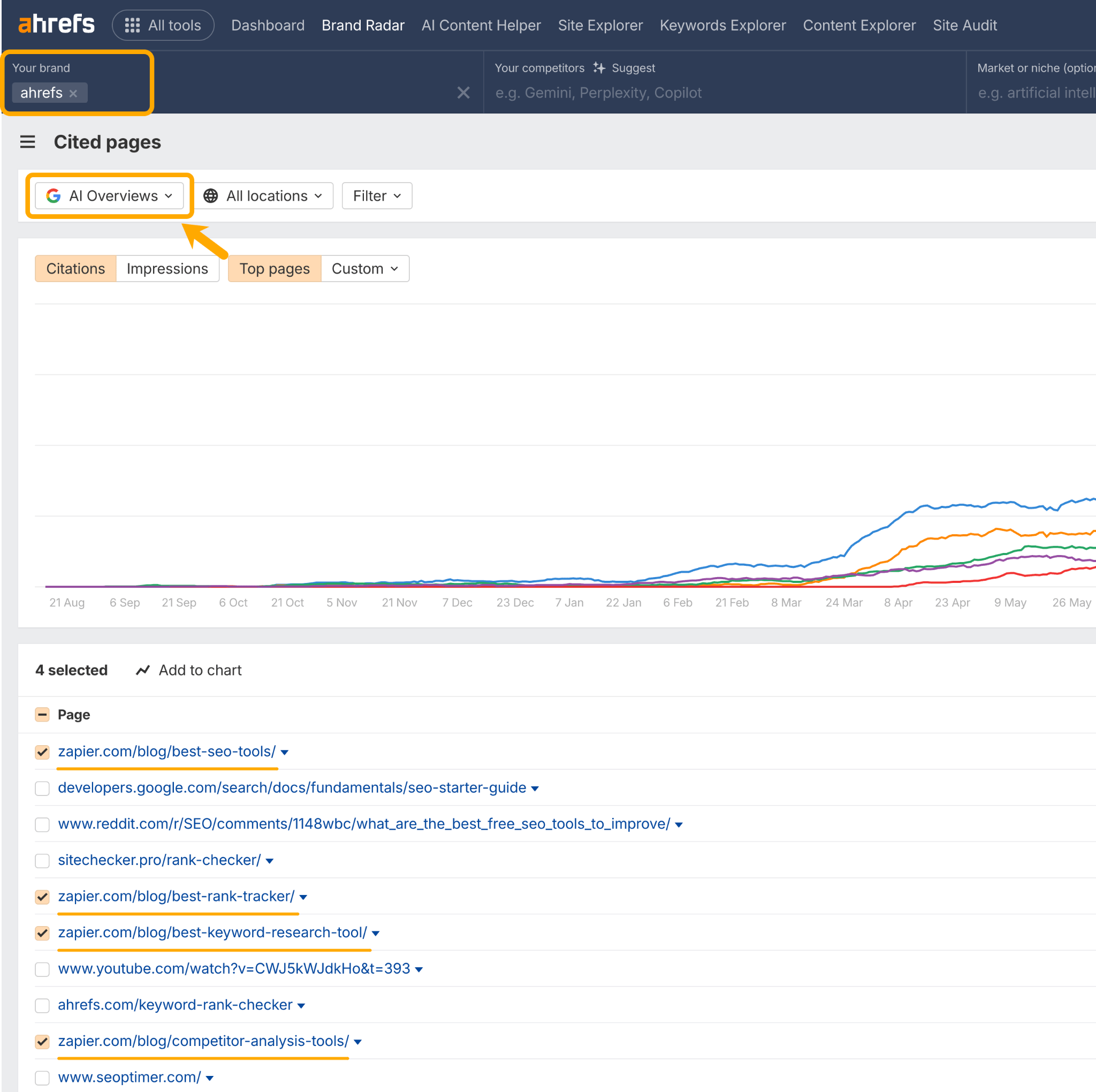

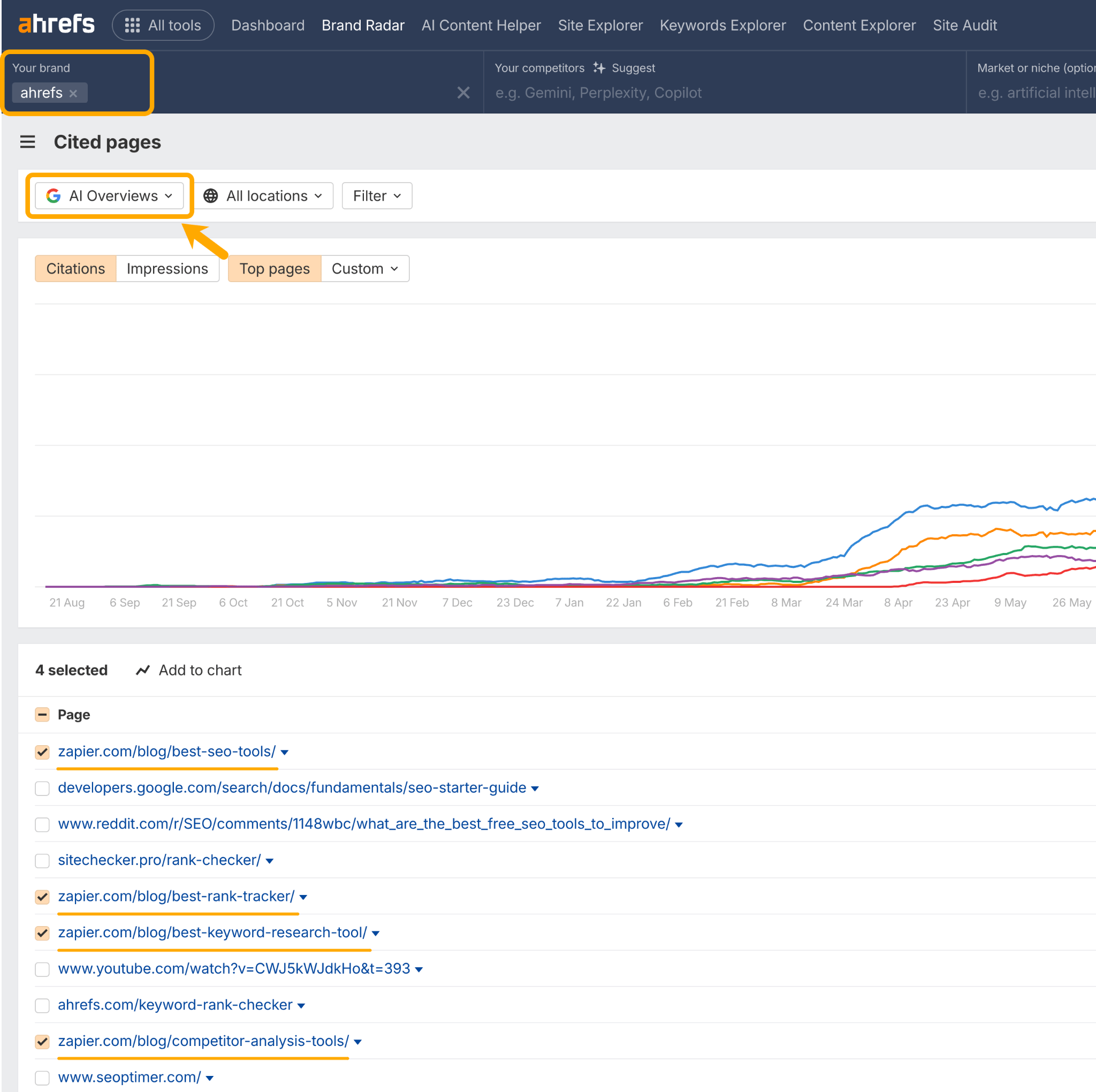

To search out collaboration alternatives, we will head to Ahrefs Model Radar and use the Cited Domains report.

On this instance, I’ve set my area of interest to “AI search engine optimization”, and am trying on the most cited domains in ChatGPT.

There are two authoritative publications that will simply be open to a PR pitch: Tech Radar and Forbes.

You’ll be able to repeat this evaluation in your personal market. See which internet sites present up constantly throughout a number of niches, and develop ongoing collaborations with probably the most seen ones.

Evaluations and community-building

To construct constructive mentions, encourage real dialogue and consumer word-of-mouth.

We do that continually at Ahrefs. Our CMO, Tim Soulo, places name outs for suggestions throughout social media. Our Product Advisor, Patrick Stox, contributes frequently to Reddit discussions. And we level all our customers to our buyer suggestions website the place they’ll focus on, request, and upvote options.

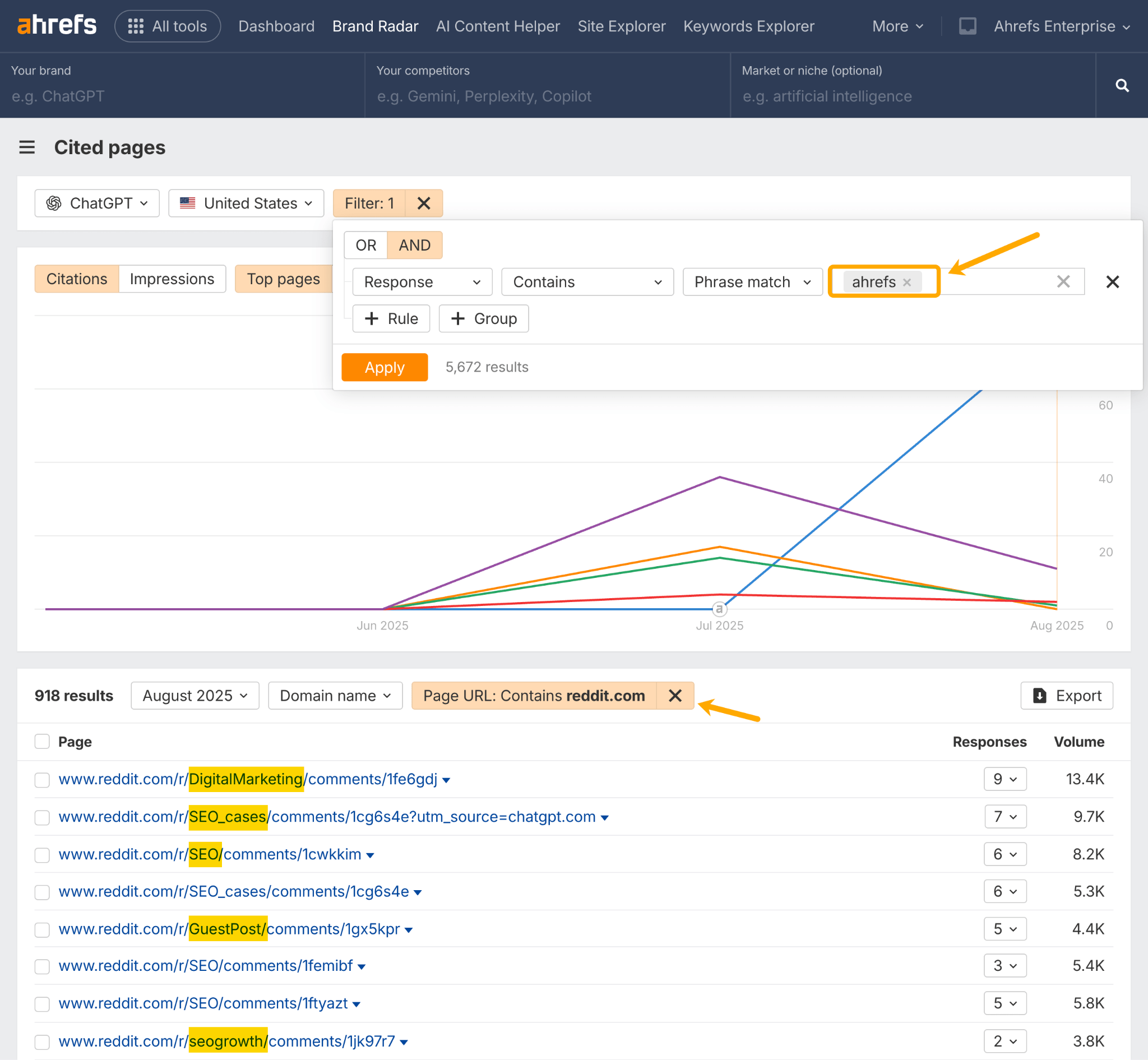

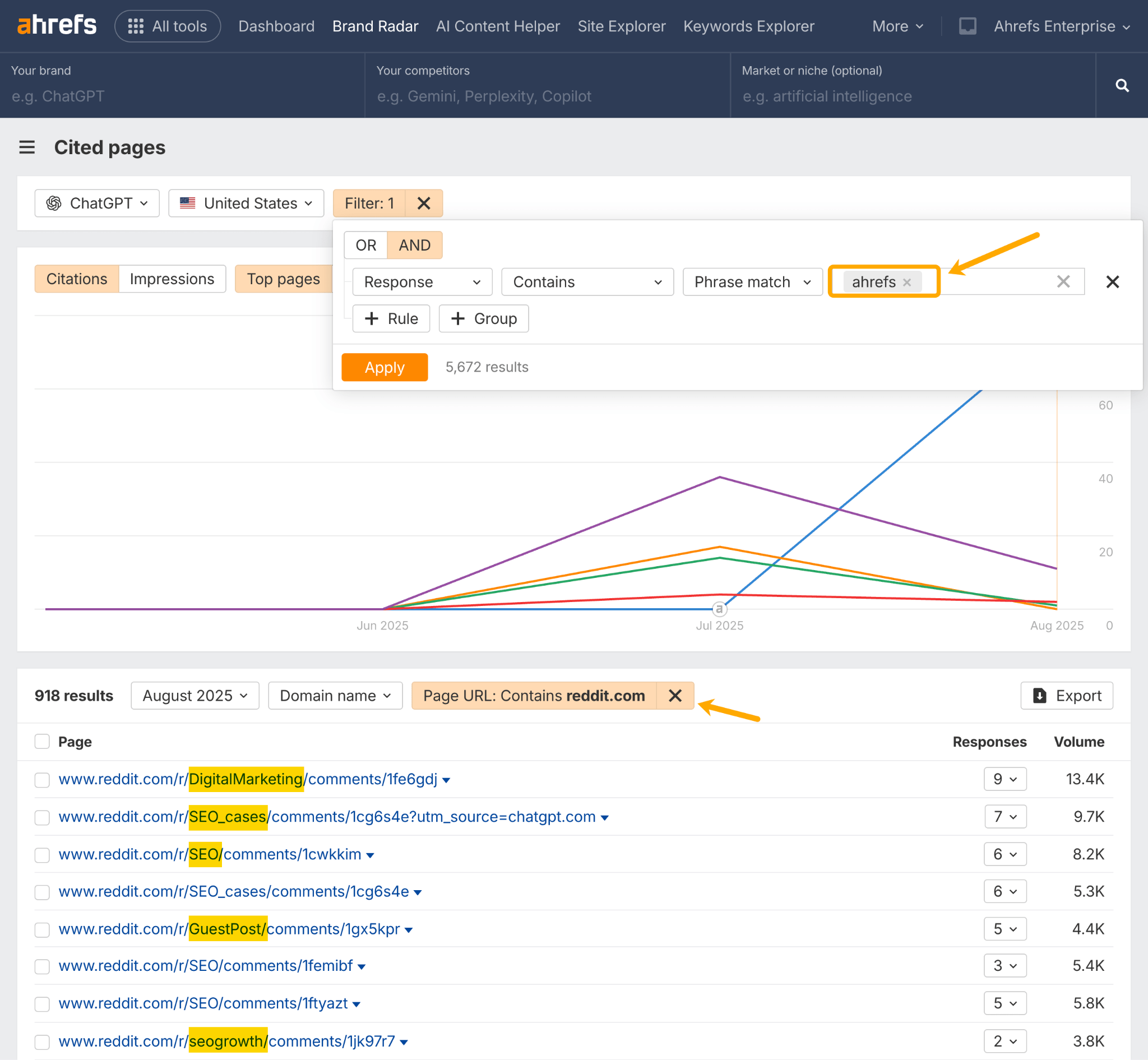

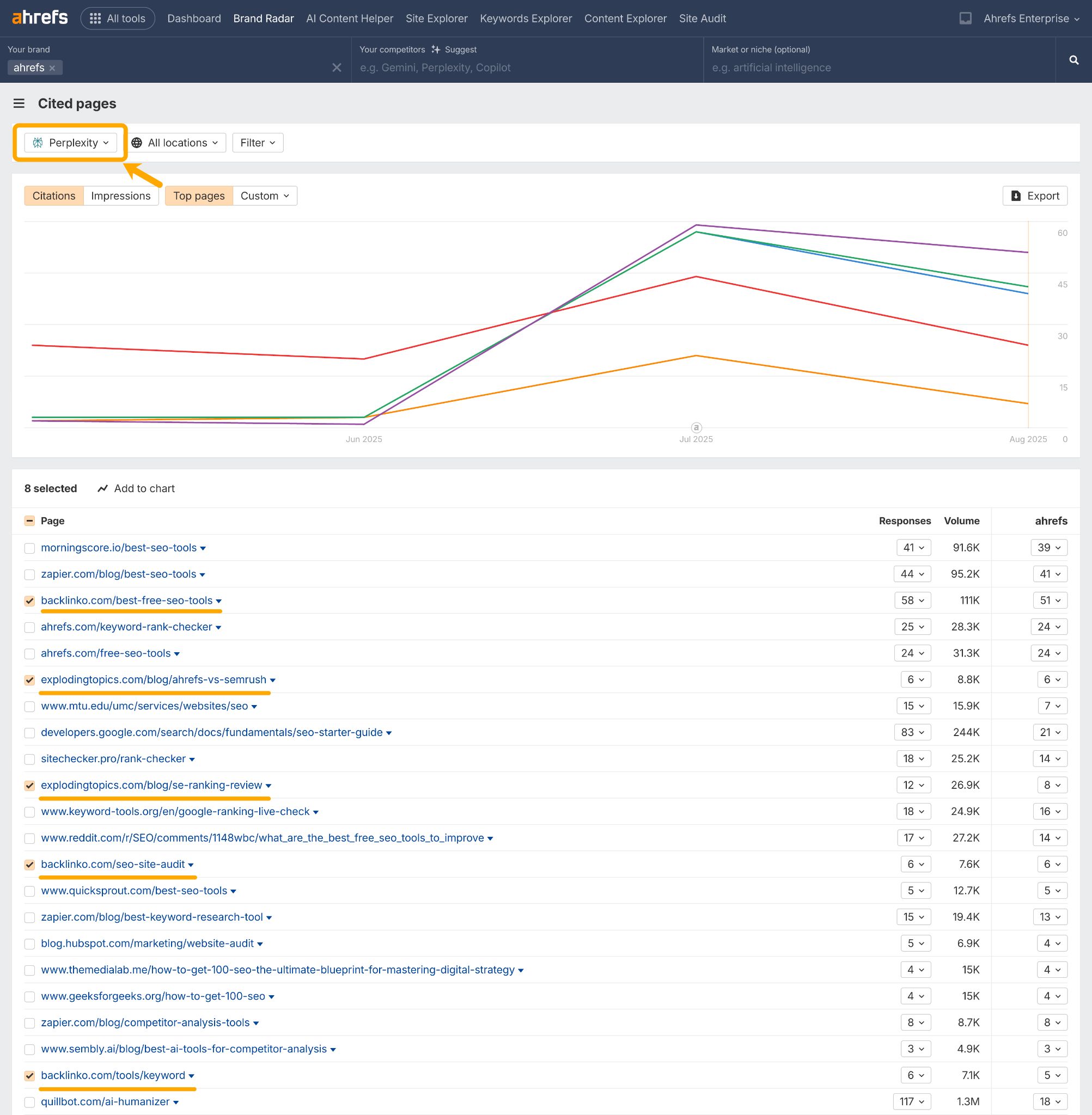

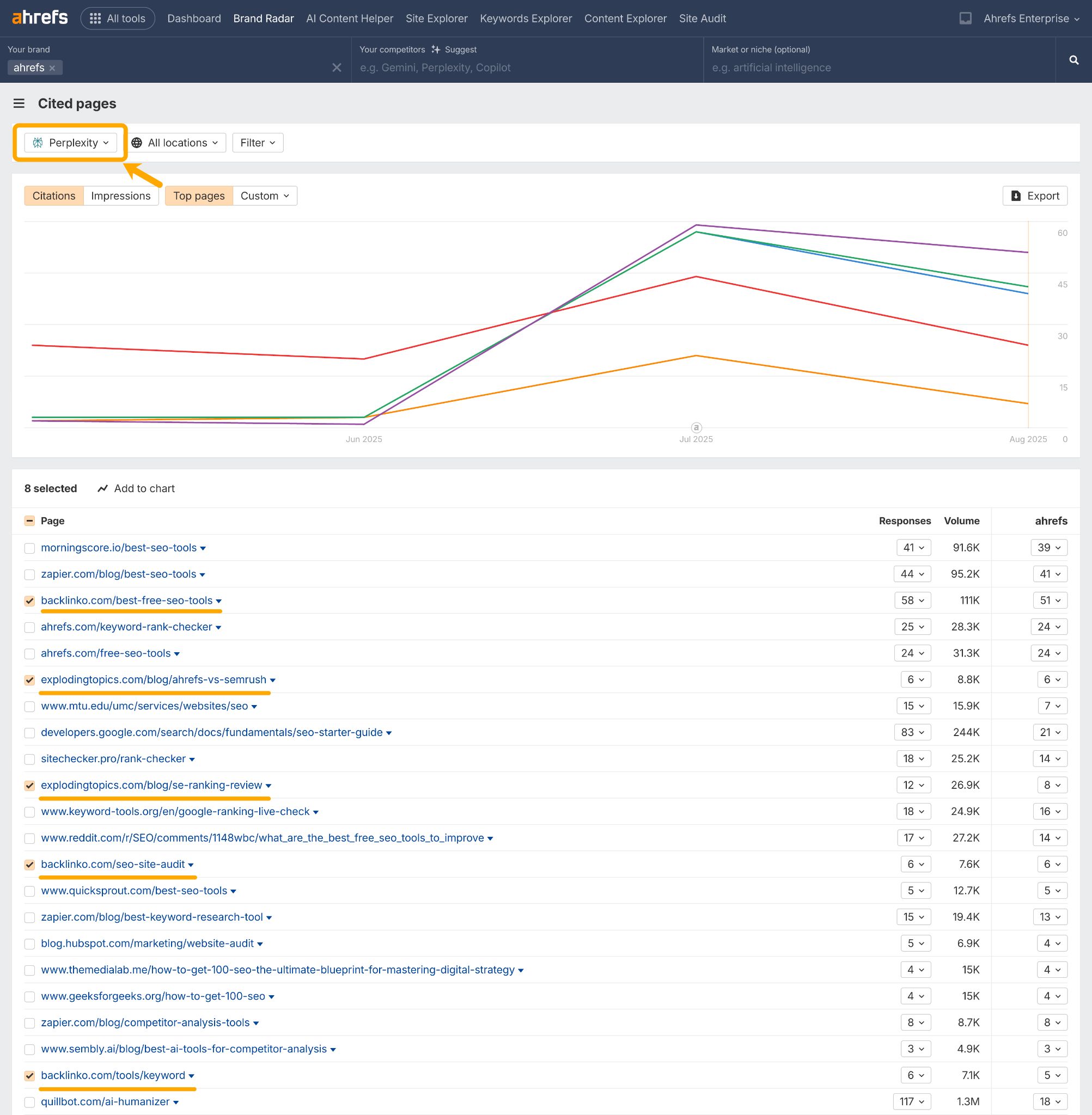

You need to use Ahrefs Model Radar to get began with your personal group technique. Head to the Cited Pages report, enter your area, and verify which UGC discussions are exhibiting up in AI associated to your model.

On this instance, I’ve taken word of the subreddits that frequently point out Ahrefs.

One tack we might take right here is to construct an even bigger presence in these communities.

My colleague, SQ, wrote a nice information on easy methods to present up authentically on Reddit as a model. It’s a few years previous now, however all the recommendation nonetheless rings true. I like to recommend studying it!

Model messaging

While you get your messaging proper, you give folks the fitting language to explain your model—which creates extra consciousness.

The extra the message will get repeated, the extra space it takes up in a buyer’s thoughts, and in LLM search.

This provides you a better “share of reminiscence”.

You’ll be able to gauge the affect of your model messaging by monitoring your co-mentions.

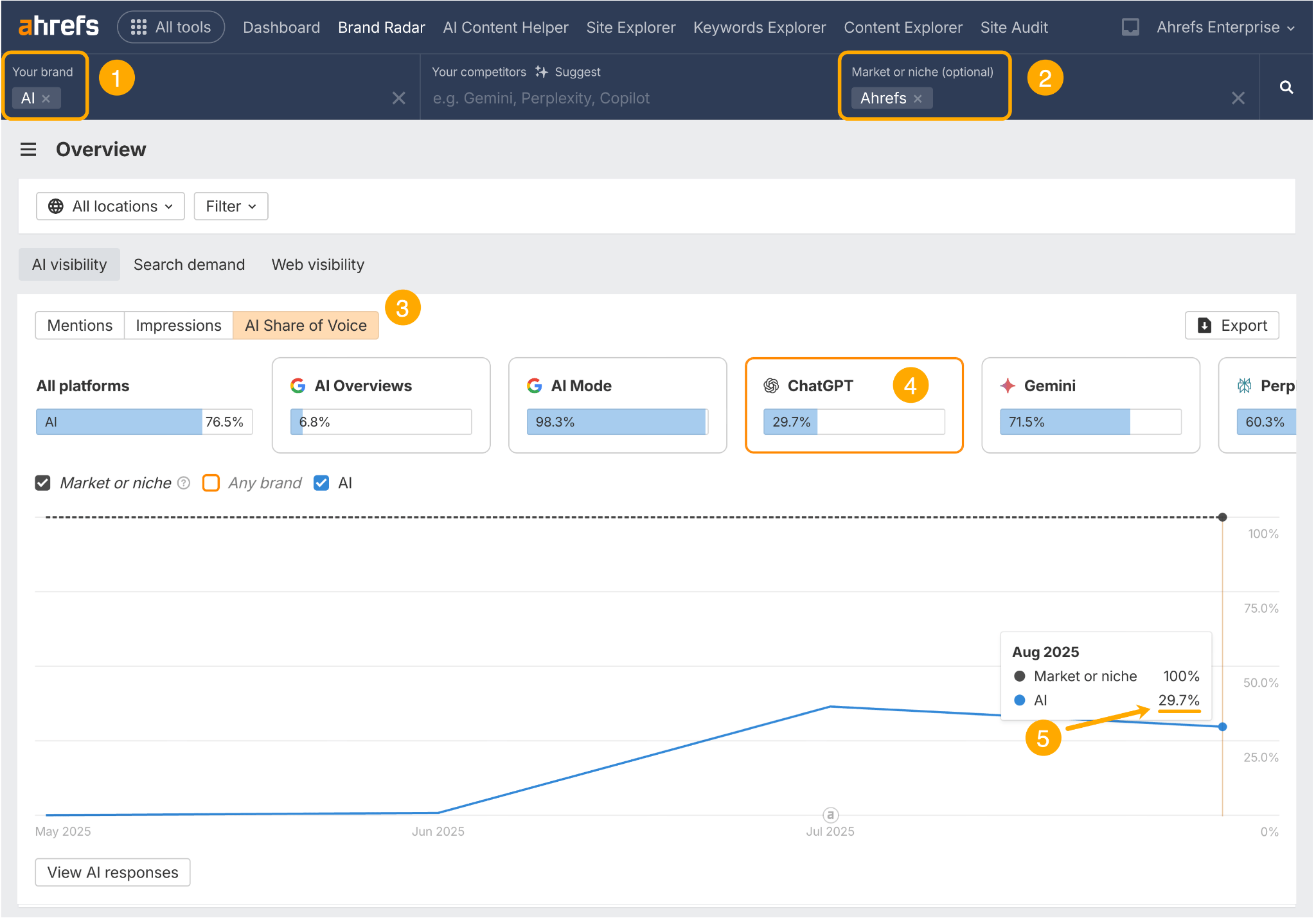

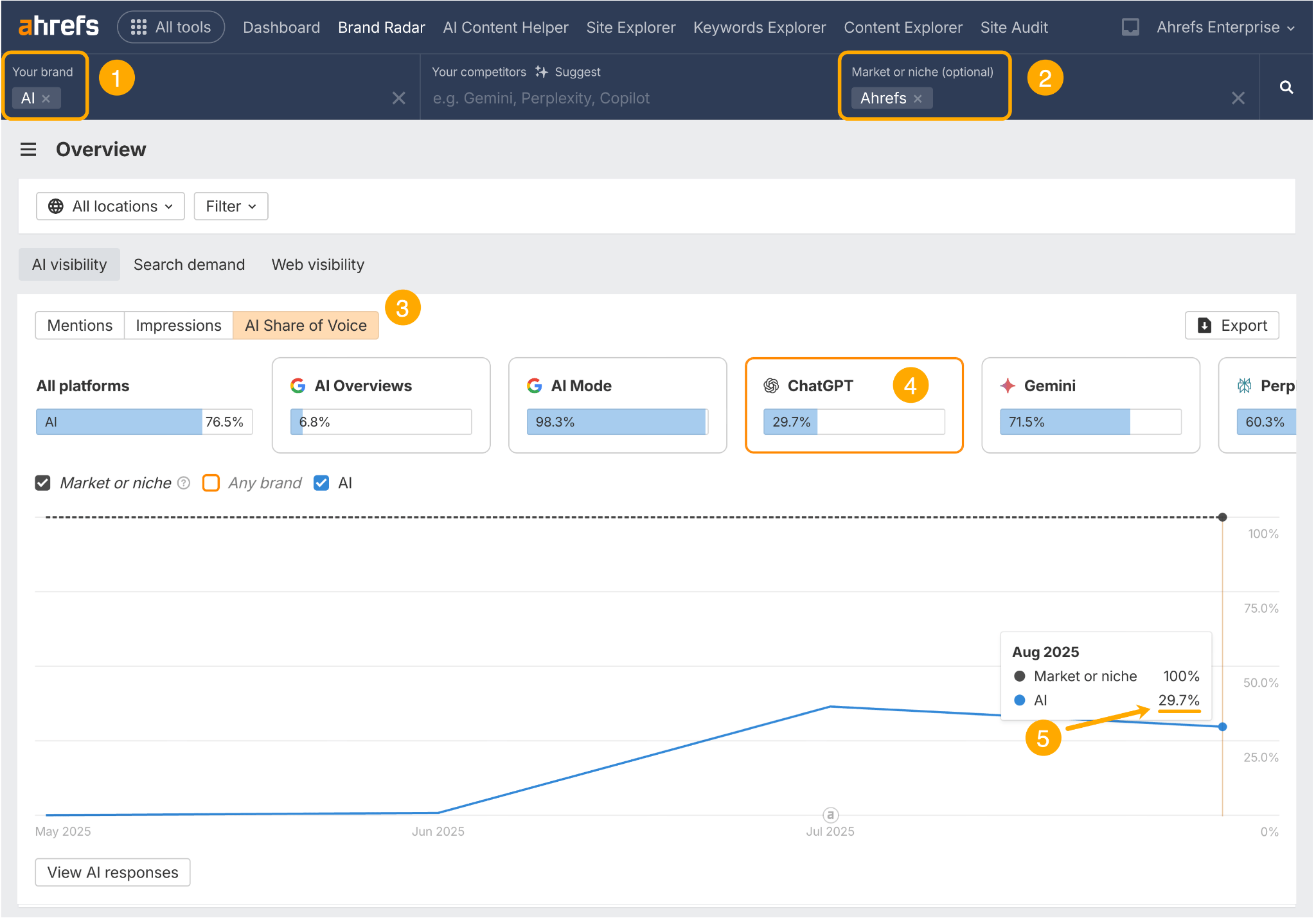

Head to the principle dashboard of Ahrefs Model Radar. Then:

- Add your co-mention subject within the “model” area

- Add your model title within the “market or area of interest” area

- Head to the AI Share of Voice report

- Choose the AI platform you need to analyze

- Observe your co-mention share over time

This reveals me that 29.7% of “Ahrefs” mentions in ChatGPT additionally point out the subject of AI.

If we need to dominate AI conversations in LLM search—which, by the way, we do—we will monitor this share over time to know model alignment, and see which ways transfer the needle.

*

With regards to boosting model consciousness, relevance is key.

You need your off-site content material to align along with your product and story.

The extra related mentions are to your model, the extra doubtless folks can be to proceed to say, search, and cite it.

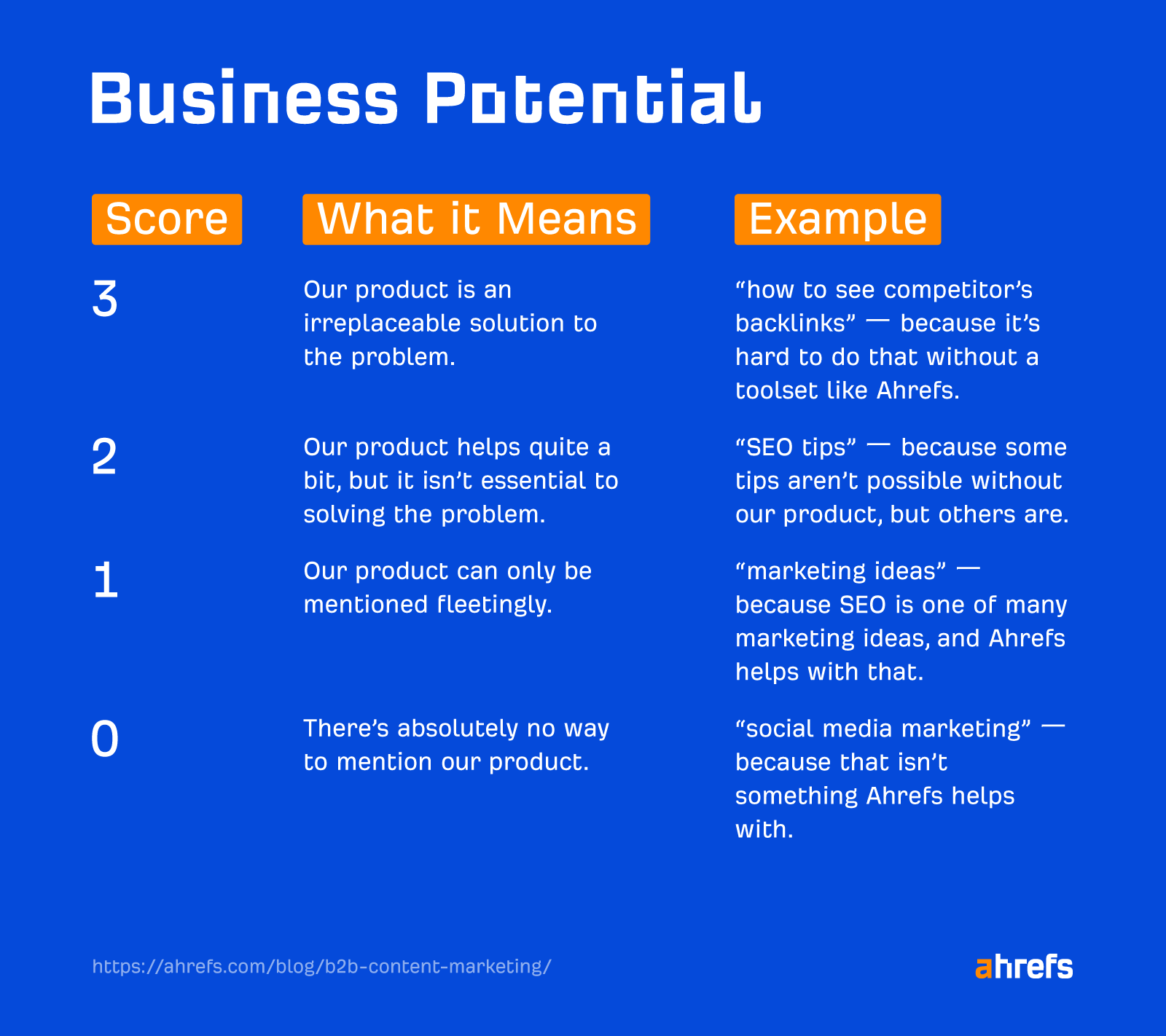

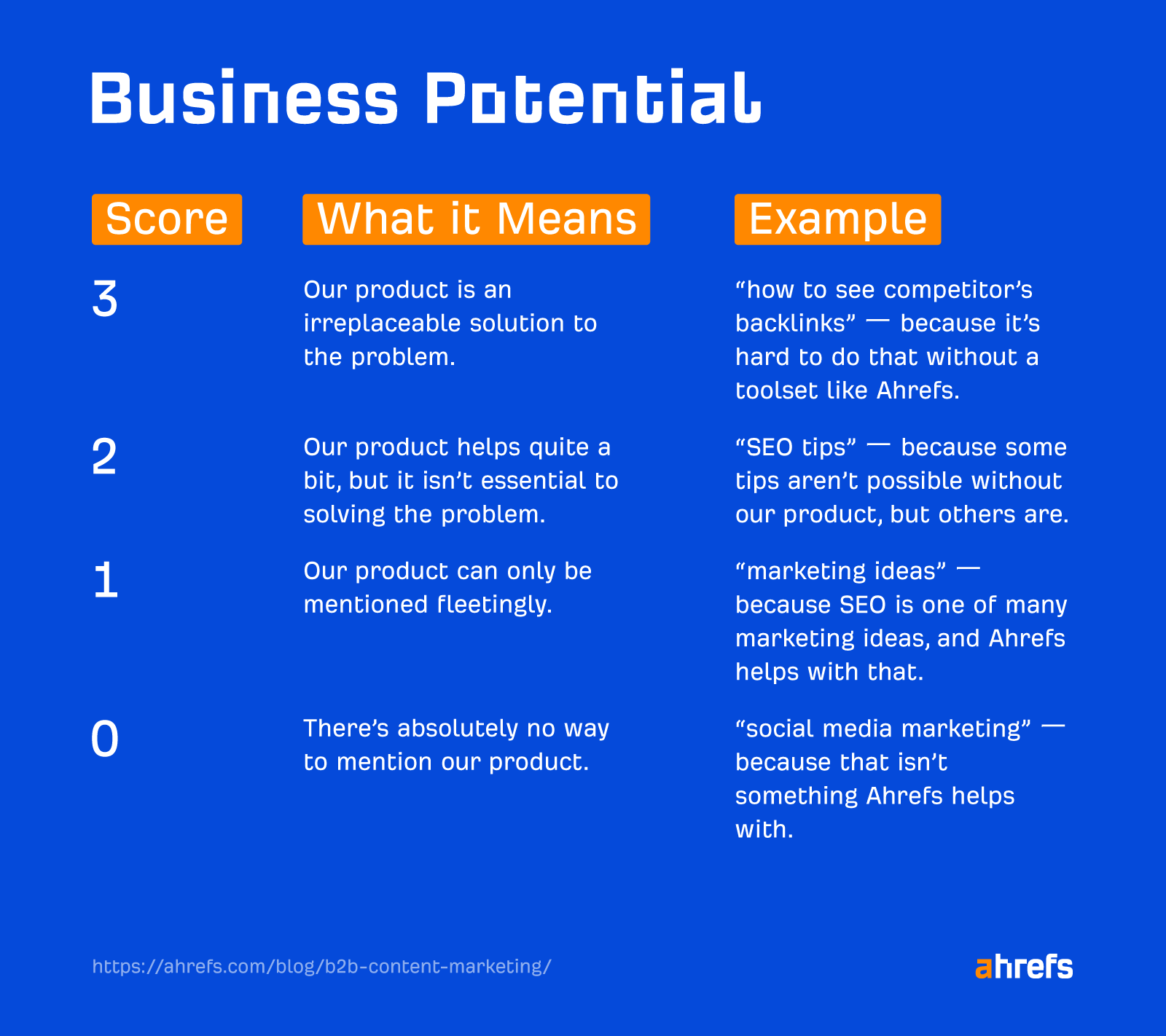

I consider it by way of our Enterprise Potential matrix. We goal to write down about subjects that rating “3” on the Enterprise Potential scale—these are those that may’t be mentioned with out mentioning Ahrefs.

With regards to LLM search, your MO must be protecting excessive Enterprise Potential subjects to create a suggestions loop of internet mentions and AI visibility.

Numerous recommendation has been flying round about structuring content material for AI and LLM search—not all of it substantiated.

Personally, I’ve been cautious in giving recommendation on this subject, as a result of it’s not one thing we’ve had an opportunity to check but.

Which is why Dan Petrovic’s current article on how Chrome and Google’s AI deal with embedding was such a welcome addition to the dialog.

Right here’s what we took from it.

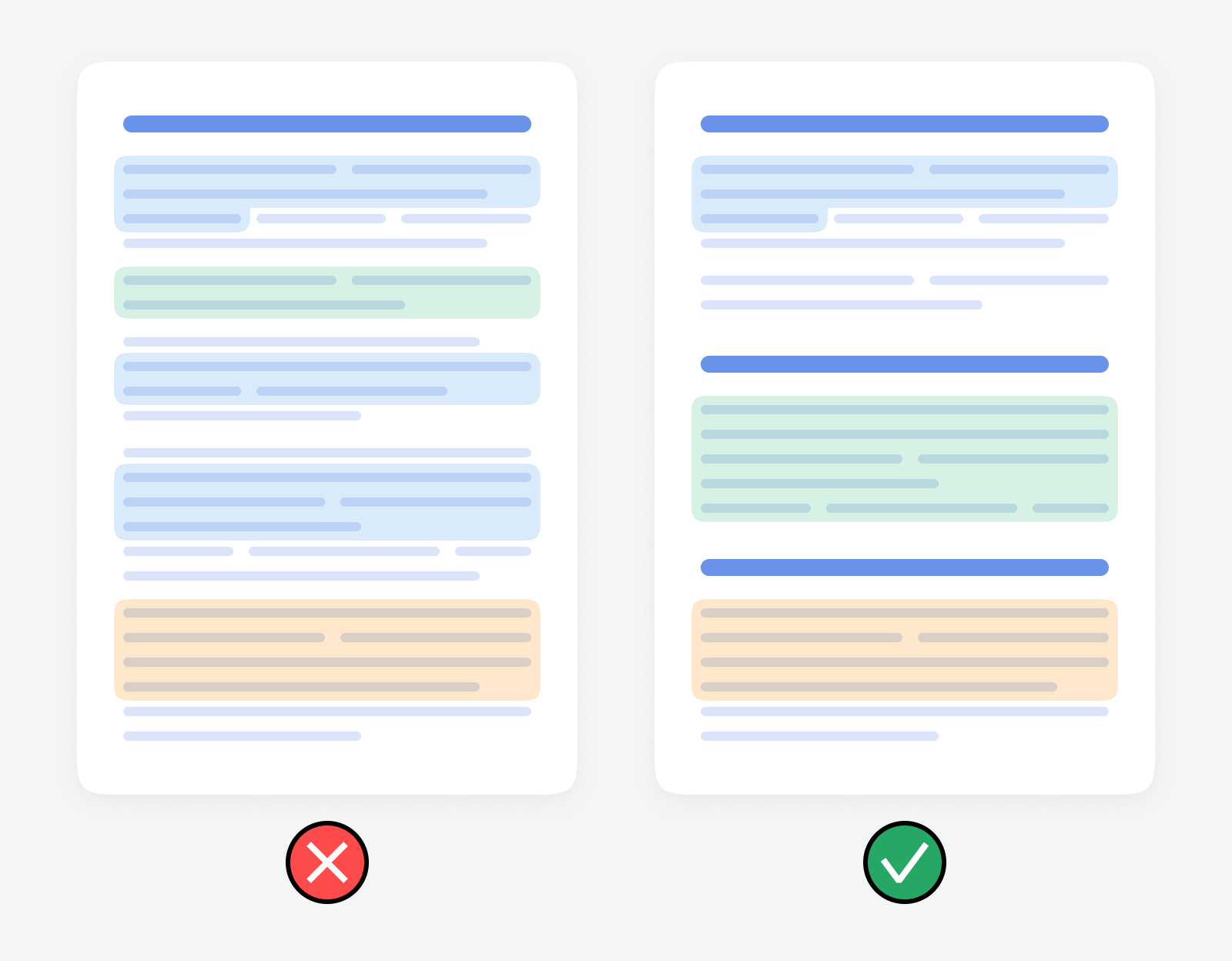

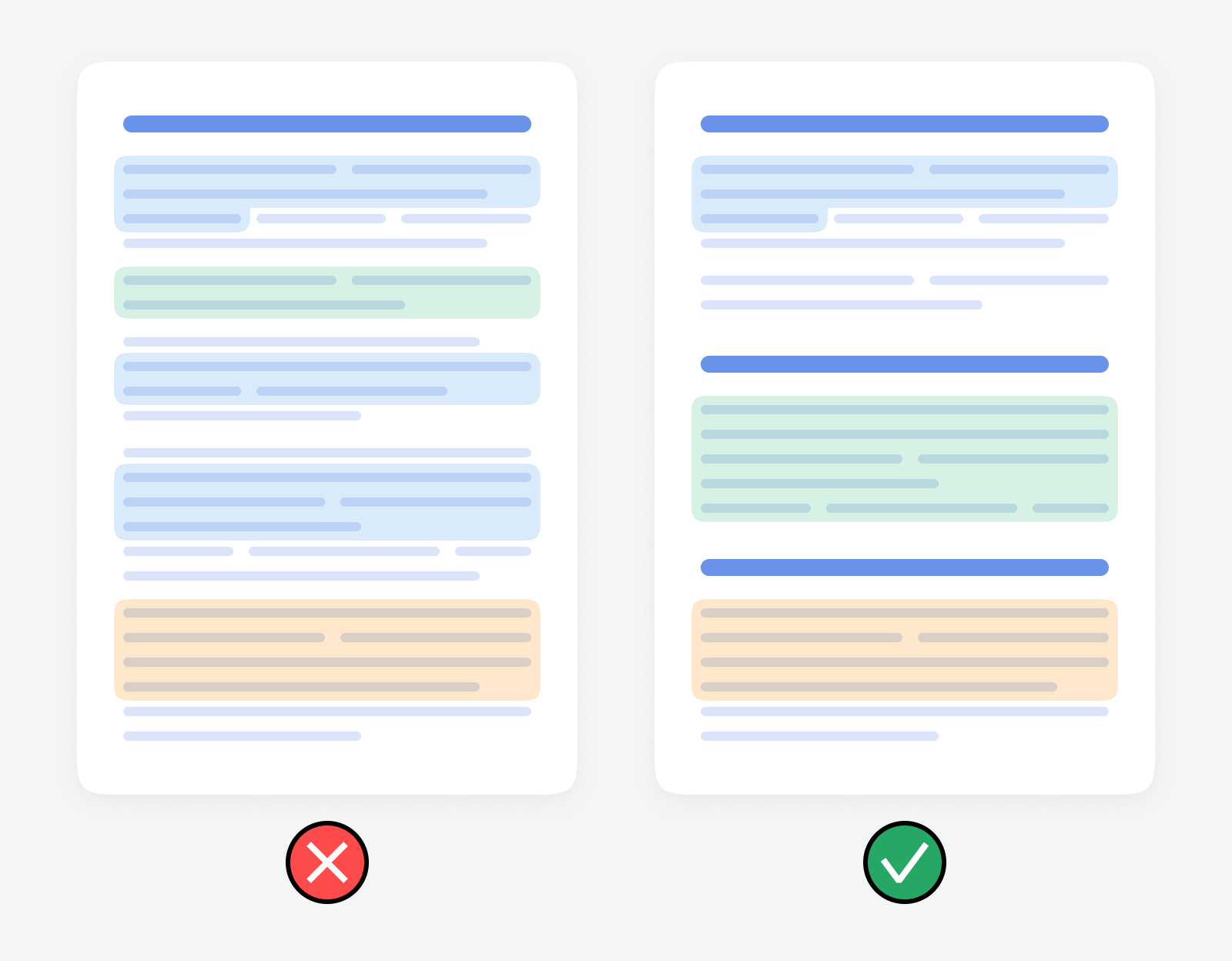

Write “BLUF” content material—Backside Line Up Entrance

Chrome solely ever considers the primary 30 passages of a web page for embeddings.

Meaning you must be sure your most vital content material seems early. Don’t waste invaluable passage slots on boilerplate, fluff, or weak intros.

Additionally, a really lengthy article received’t preserve producing countless passages—there’s a ceiling.

If you would like protection throughout a number of subtopics, create separate centered articles fairly than one huge piece that dangers being reduce off midstream.

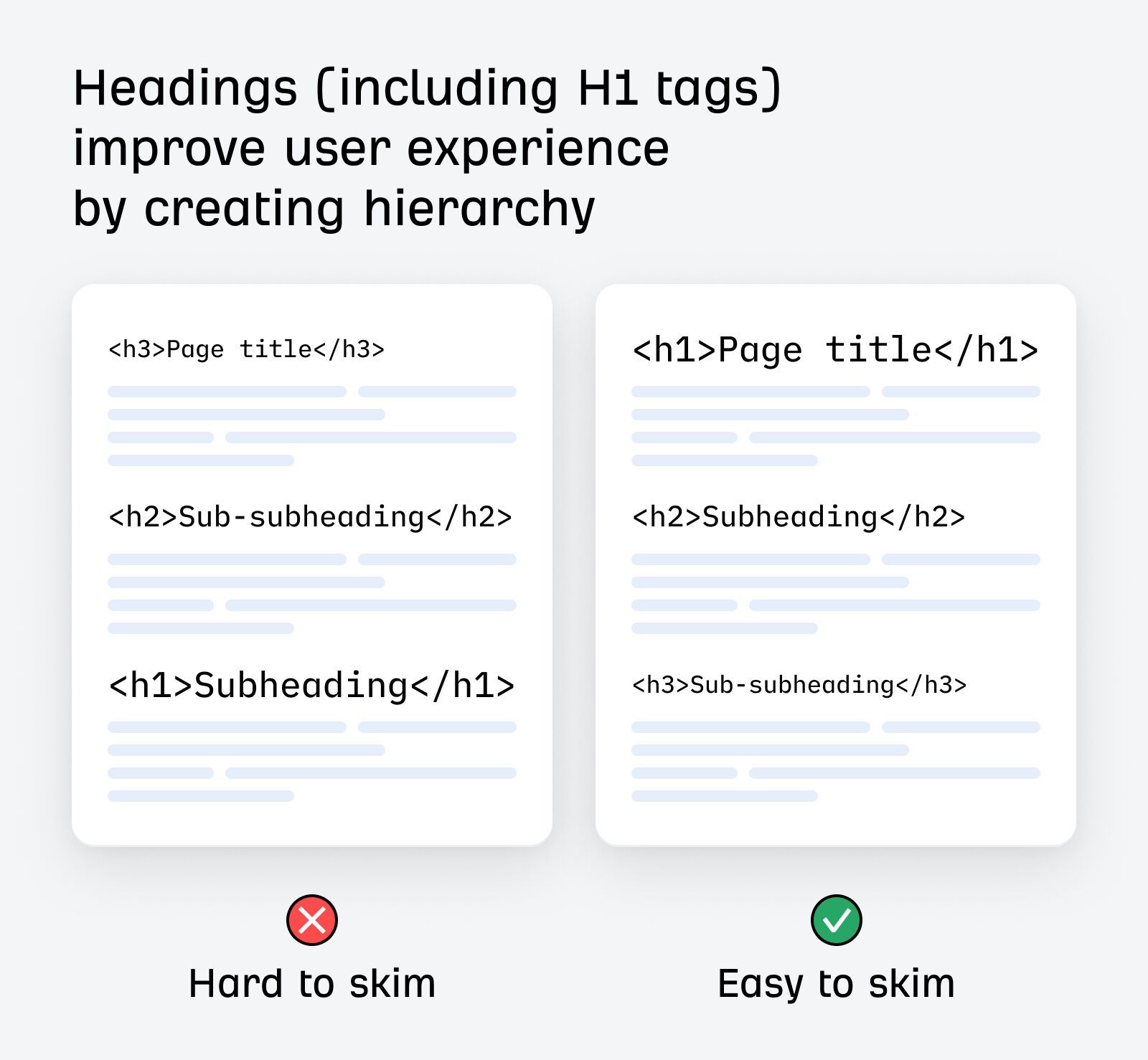

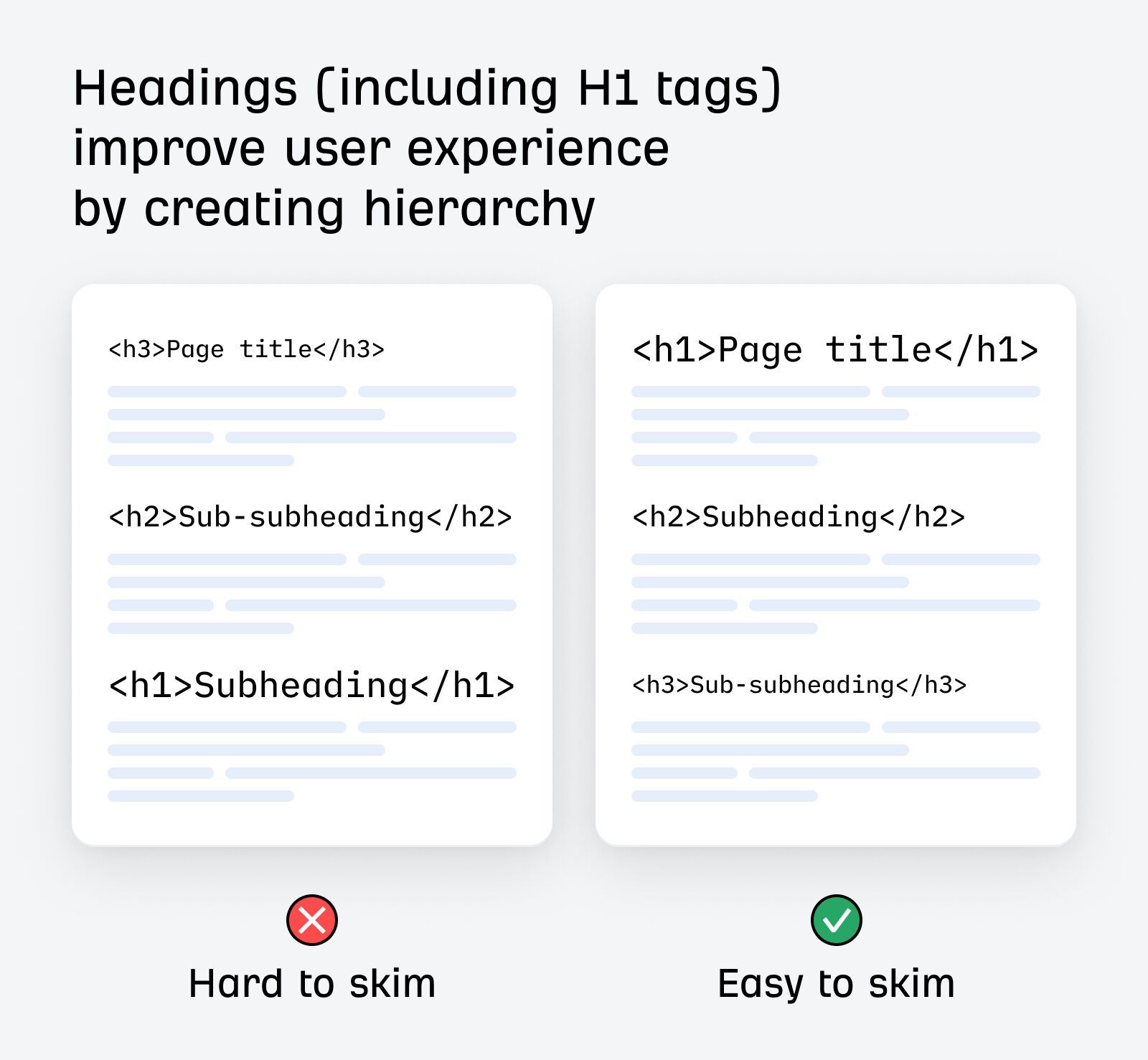

Manage your content material logically

Google’s AI makes use of a “Tree-walking algorithm”, which means it follows the precise semantic HTML construction of a webpage from high to backside—which is why well-formatted and structured content material is simpler for it to course of.

Manage your content material logically—with clear headings, subheadings, and bulleted lists.

I’m certain you’ve been doing this already anyway!

Maintain content material tight—there’s no must “chunk”

LLMs break content material into smaller “passages” (chunks) for embedding.

In response to Dan Petrovic’s findings, Chrome makes use of a “DocumentChunker Algorithm”, which solely analyzes 200-word passages.

What this implies: construction issues—every part is more likely to be retrieved in isolation.

What this doesn’t imply: “chunking” is the reply.

You don’t want to verify each part of your content material works as its personal standalone thought simply in case it will get cited.

And also you undoubtedly don’t want to write down articles like a sequence of standing updates—that’s not one thing a consumer desires to learn.

As an alternative logically group paragraphs, and develop concepts cleanly—in order that they make sense even when they get spliced.

Keep away from lengthy, rambling sections which may get reduce off or break up inefficiently.

Additionally, don’t drive redundancy in your writing—AI methods can deal with overlap.

For instance, Chrome makes use of the overlap_passages parameter to be sure that vital context isn’t misplaced throughout chunk boundaries.

So, give attention to pure circulate fairly than repeating your self to “bridge” sections—overlap is already constructed in.

Constructing content material clusters and concentrating on area of interest consumer questions could enhance your odds of being surfaced in an AI response.

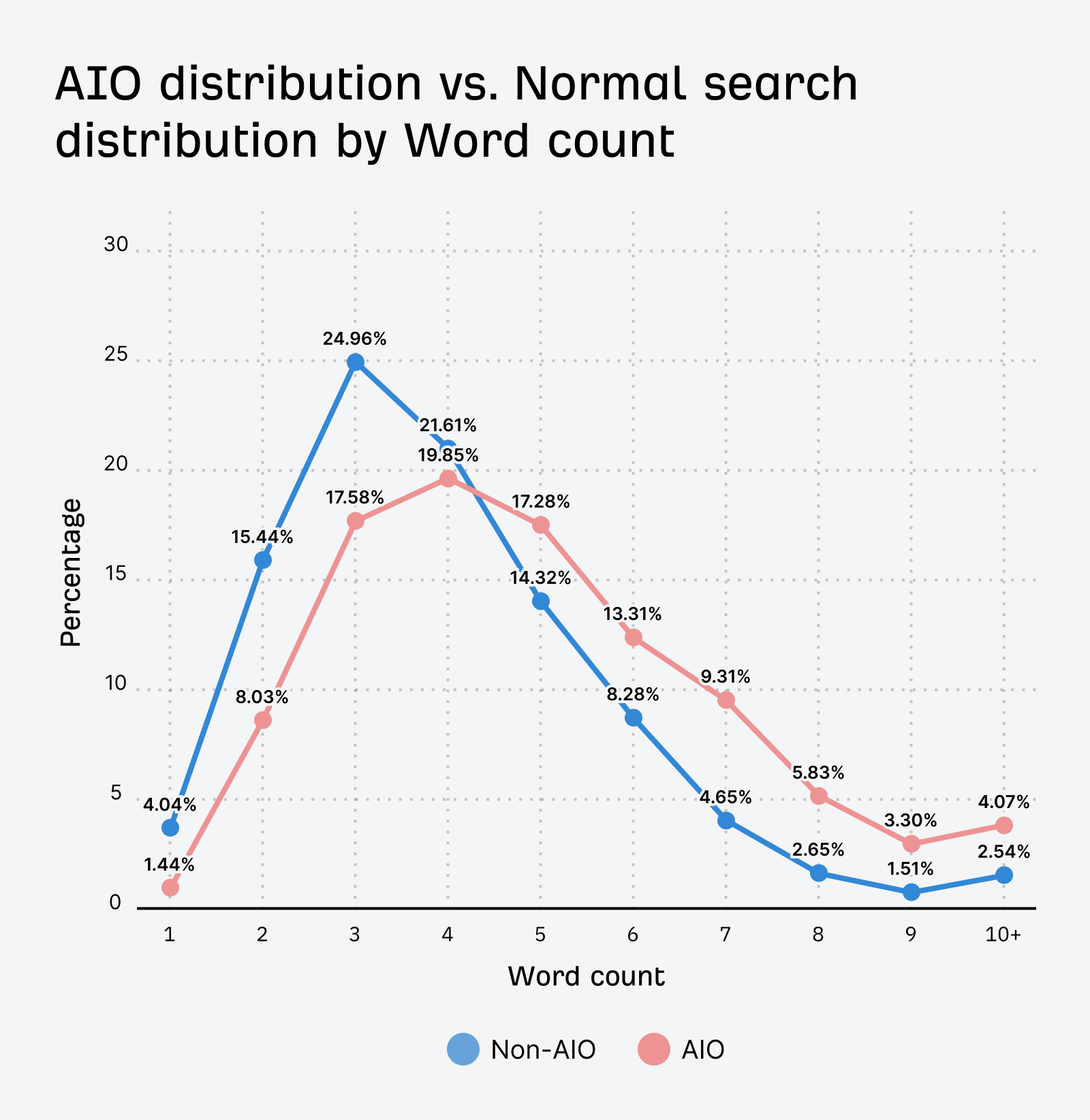

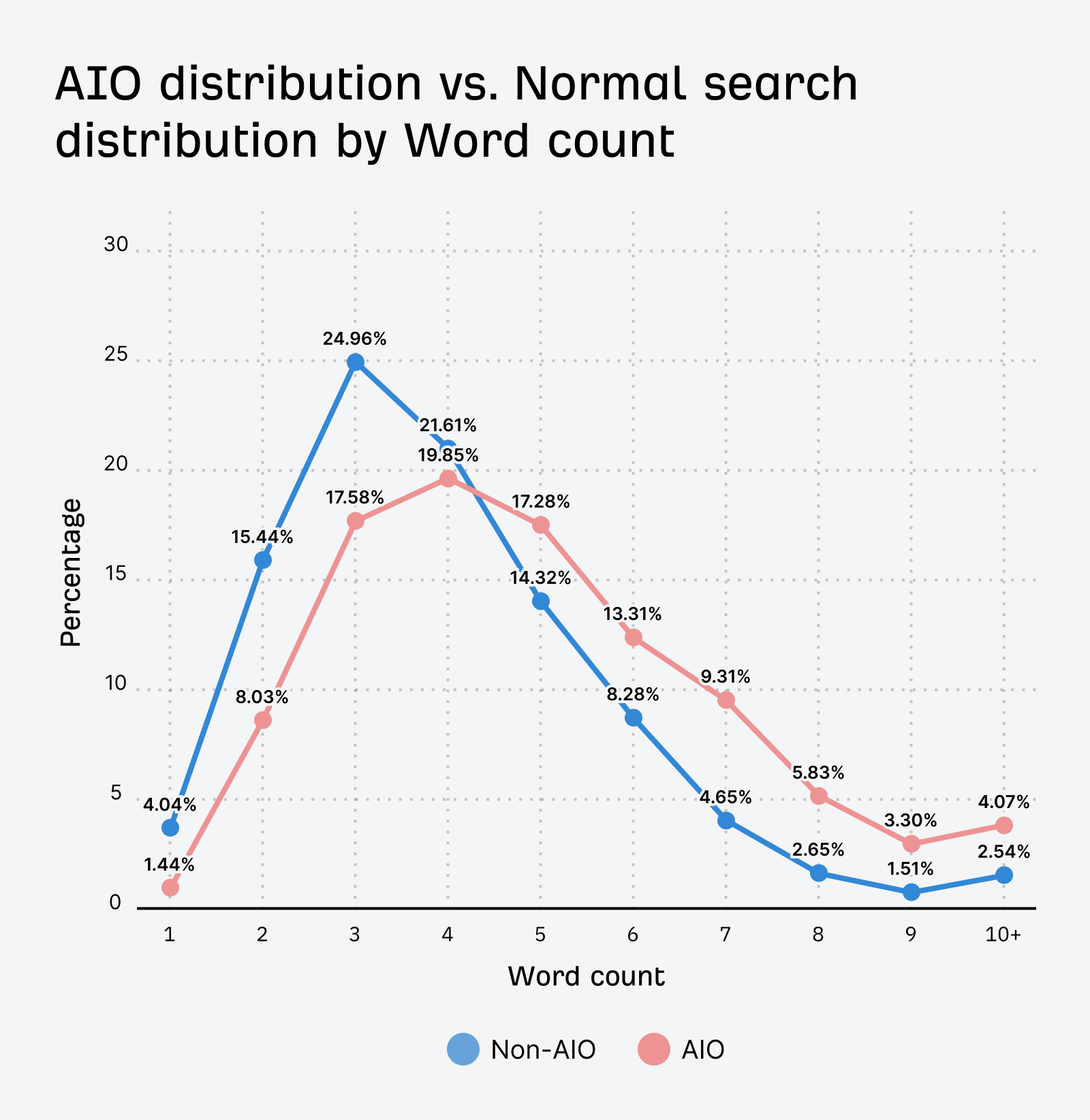

In AI assistants like ChatGPT and Gemini, prompts skew extremely long-tail.

Development Advertising and marketing Supervisor at AppSamurai, Metehan Yeşilyurt, studied ~1,800 actual ChatGPT conversations, and located the typical immediate size got here in at 42 phrases (!).

And long-tail prompts solely multiply.

AI assistants primarily “fan out” prompts into quite a few long-tail sub-queries. Then, they run these sub-queries via serps to seek out the most effective sources to cite.

Concentrating on long-tail key phrases can due to this fact enhance your odds of matching intent and profitable citations.

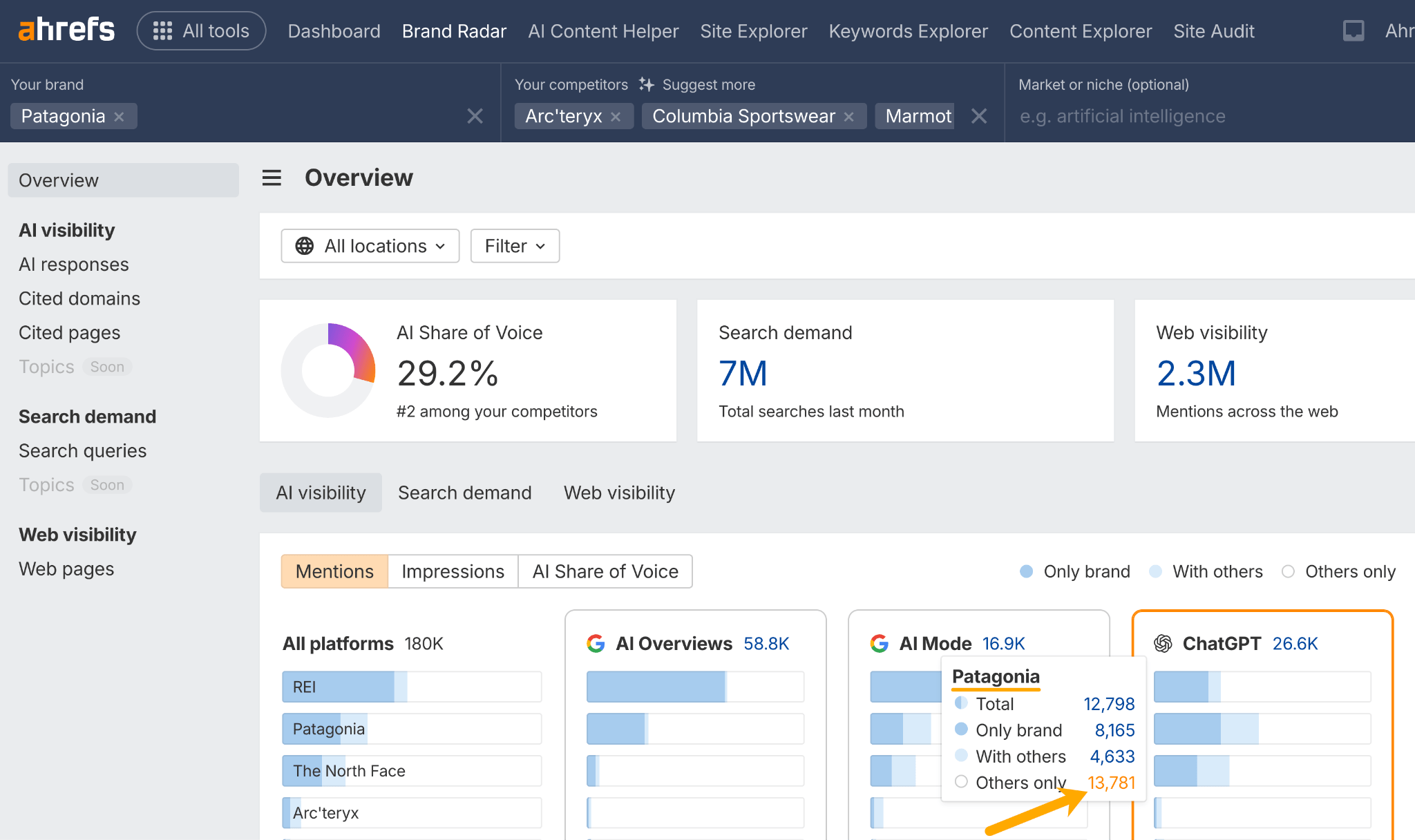

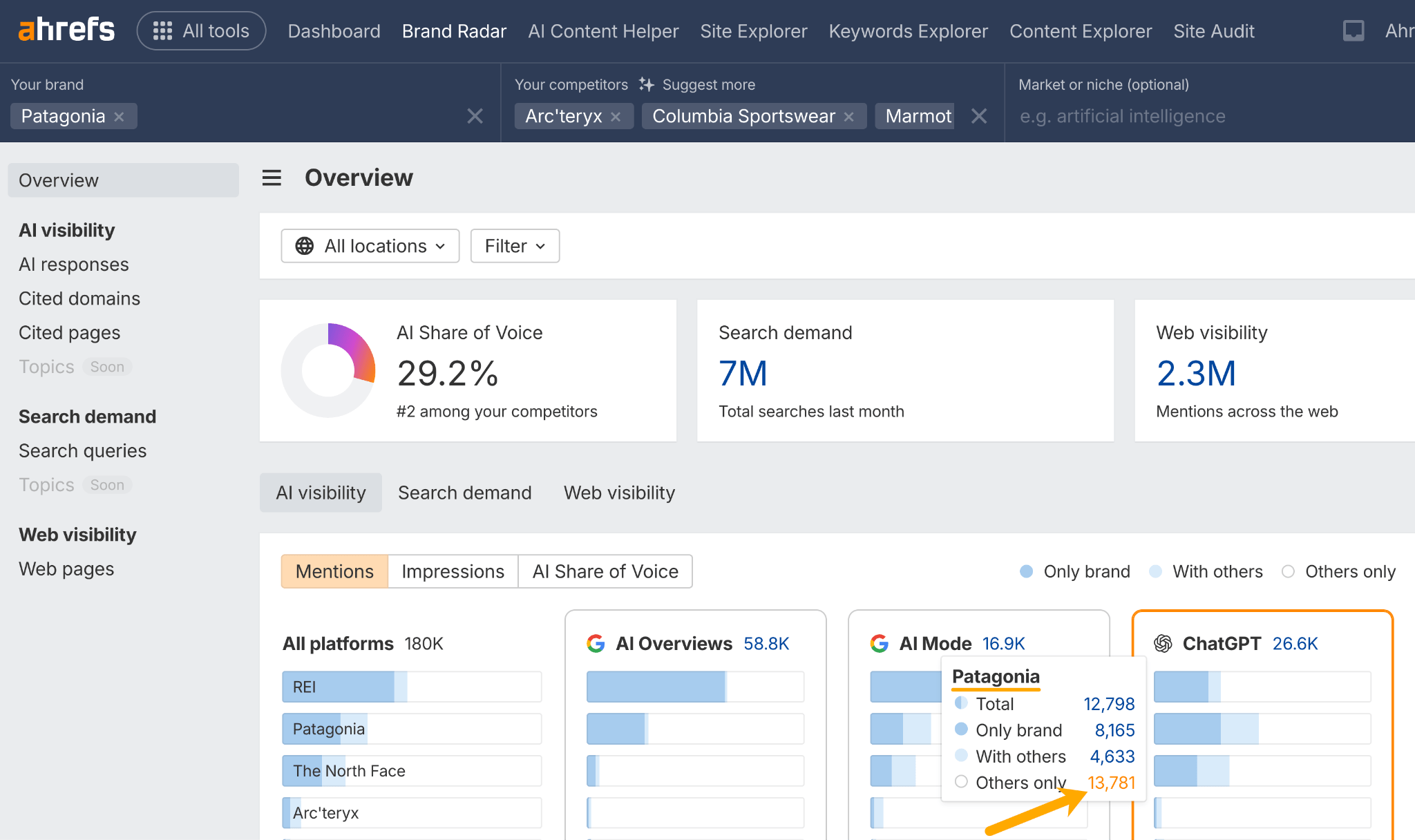

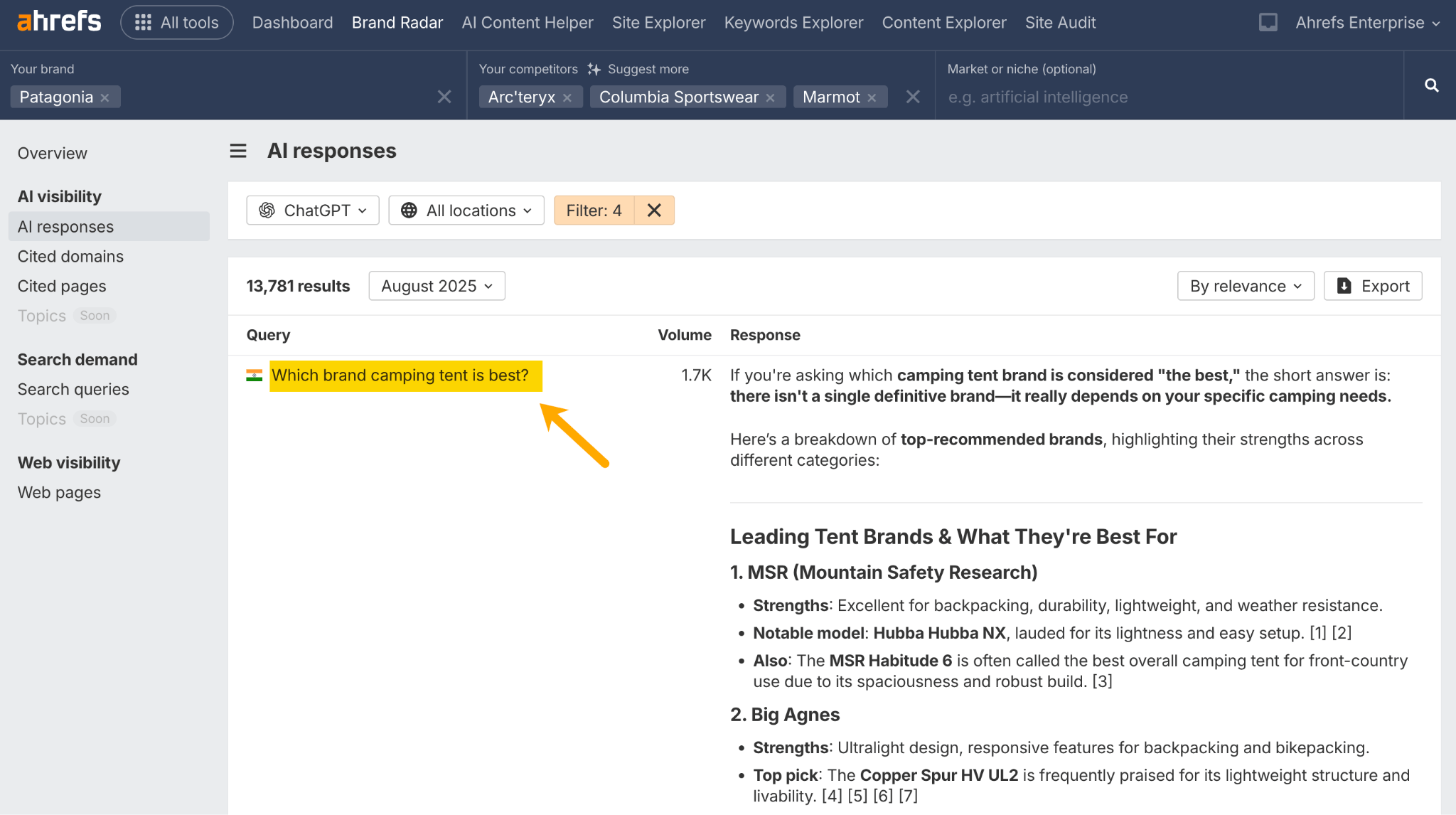

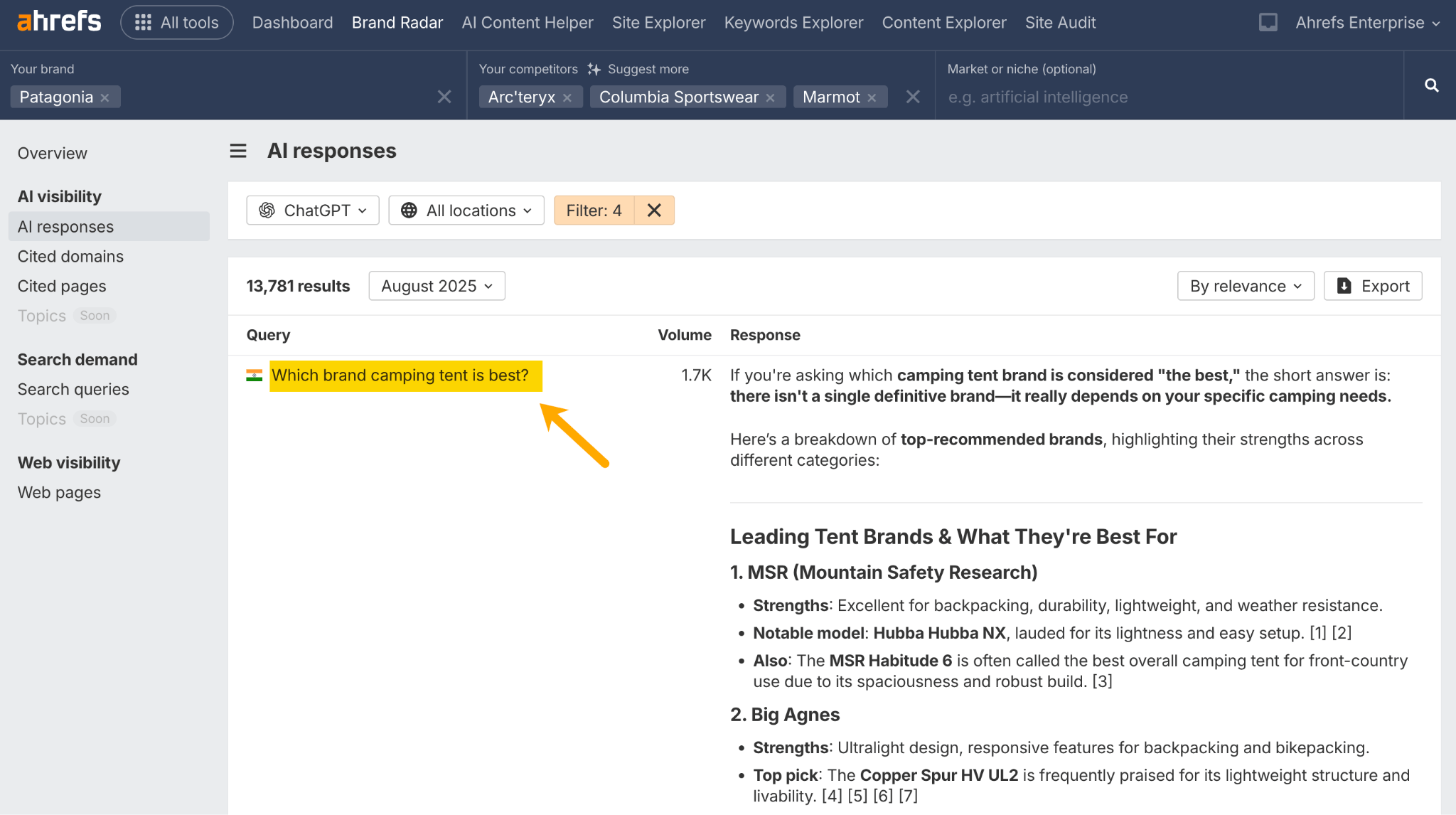

You may get long-tail key phrase concepts by performing a competitor hole evaluation in Ahrefs Model Radar.

This reveals you the prompts your opponents are seen for that you just’re not—your AI immediate hole, in the event you will.

Drop in your model and opponents, and hover over an AI assistant like ChatGPT, and click on on “Others solely”.

Then examine the returning prompts for long-tail content material concepts.

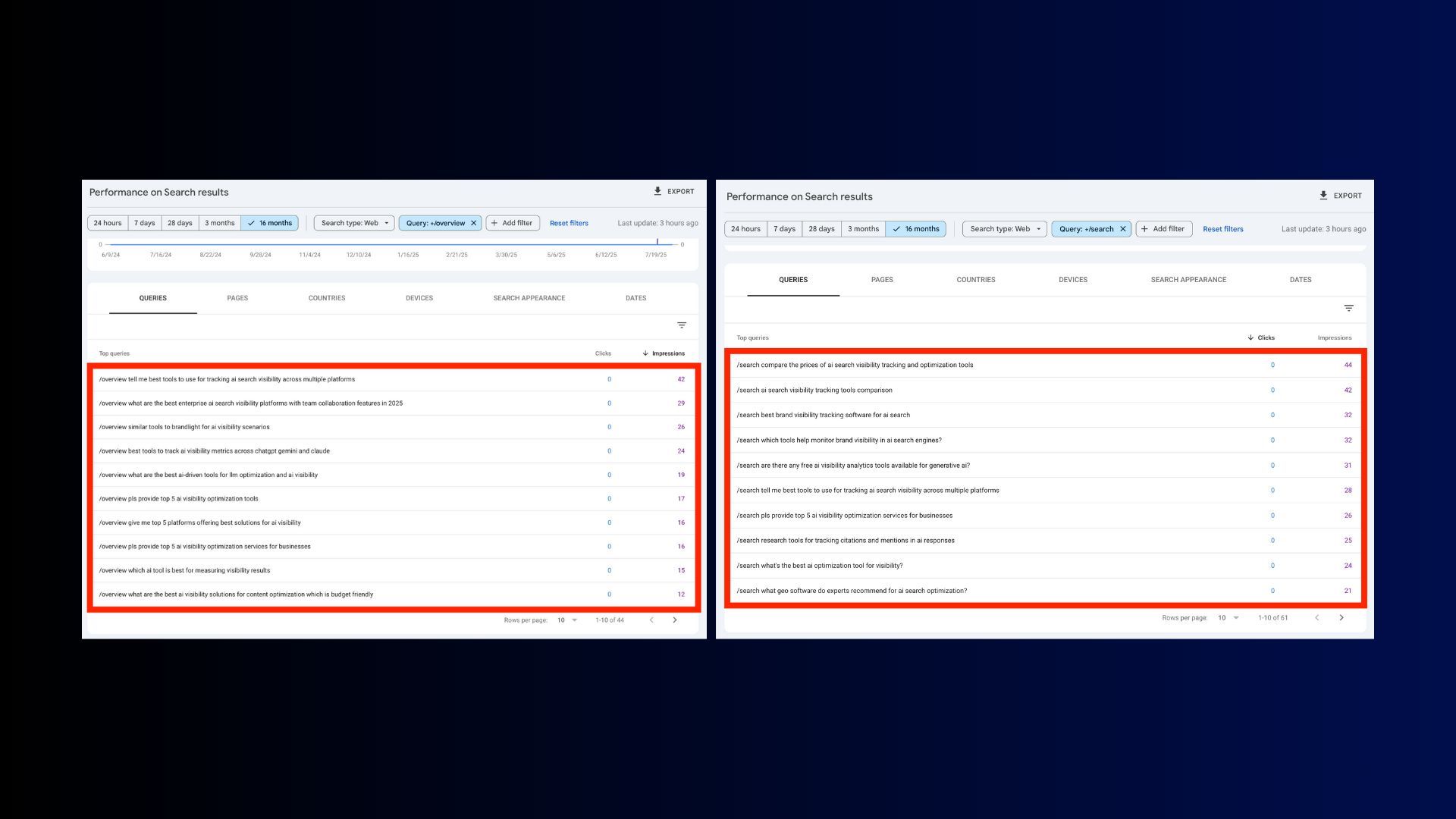

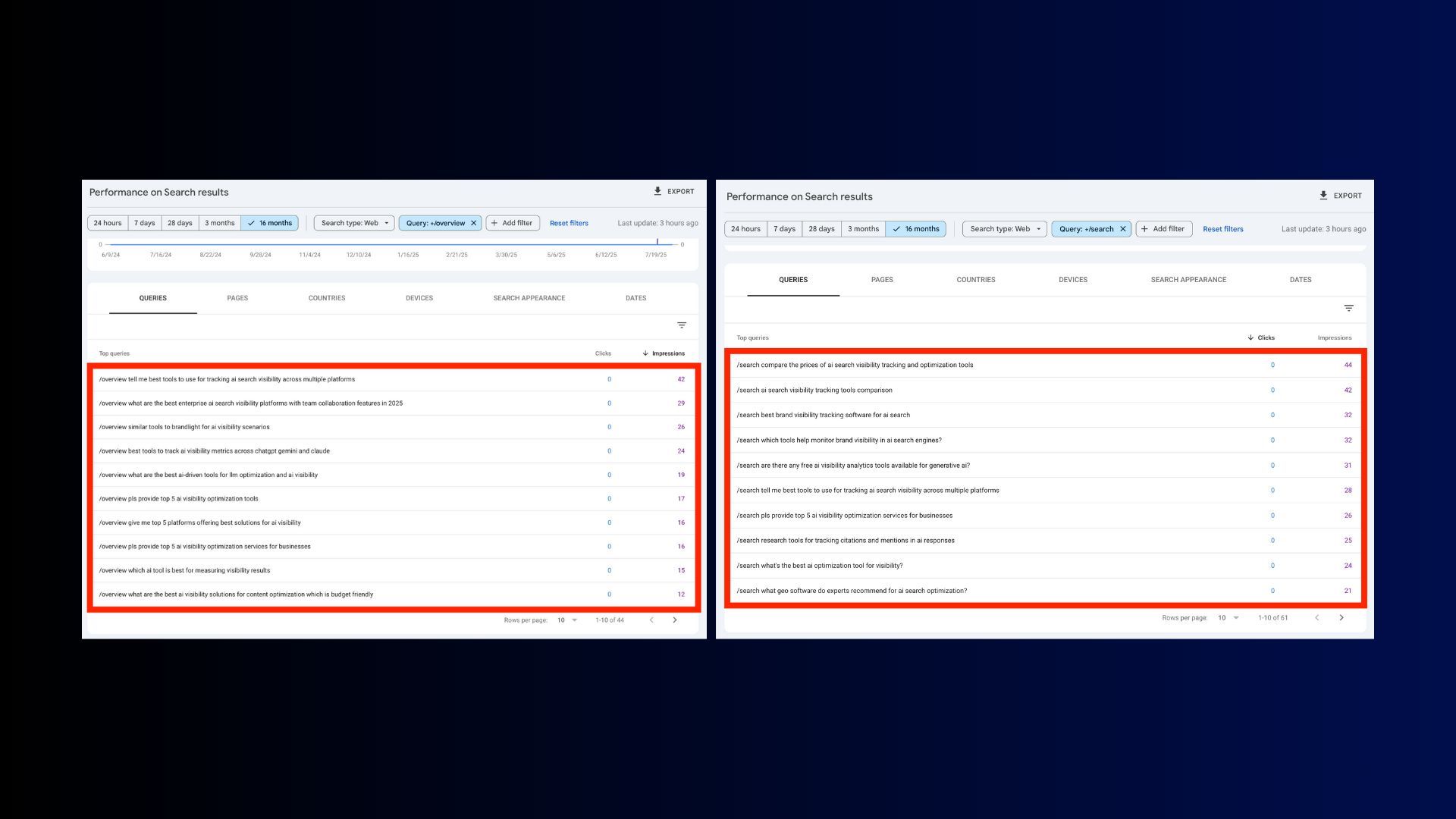

One concept by Nathan Gotch suggests that question filters in GSC containing /overview or /search reveal long-tail key phrases carried out by customers in AI Mode—so that is one other potential supply of long-tail content material concepts.

Creating content material to serve long-tail key phrases is wise. However what’s much more vital is constructing content material clusters protecting each angle of a subject—not simply single queries.

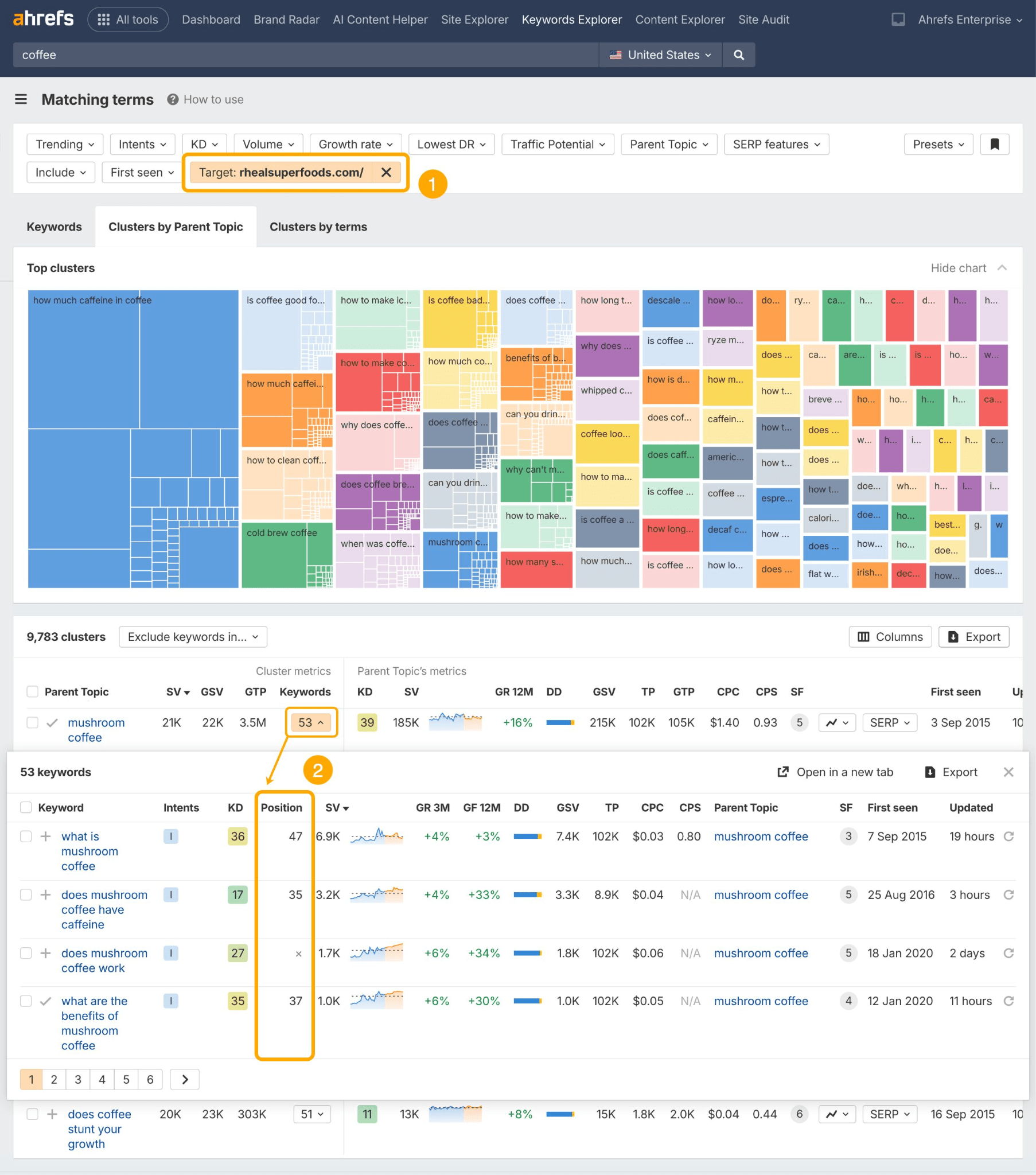

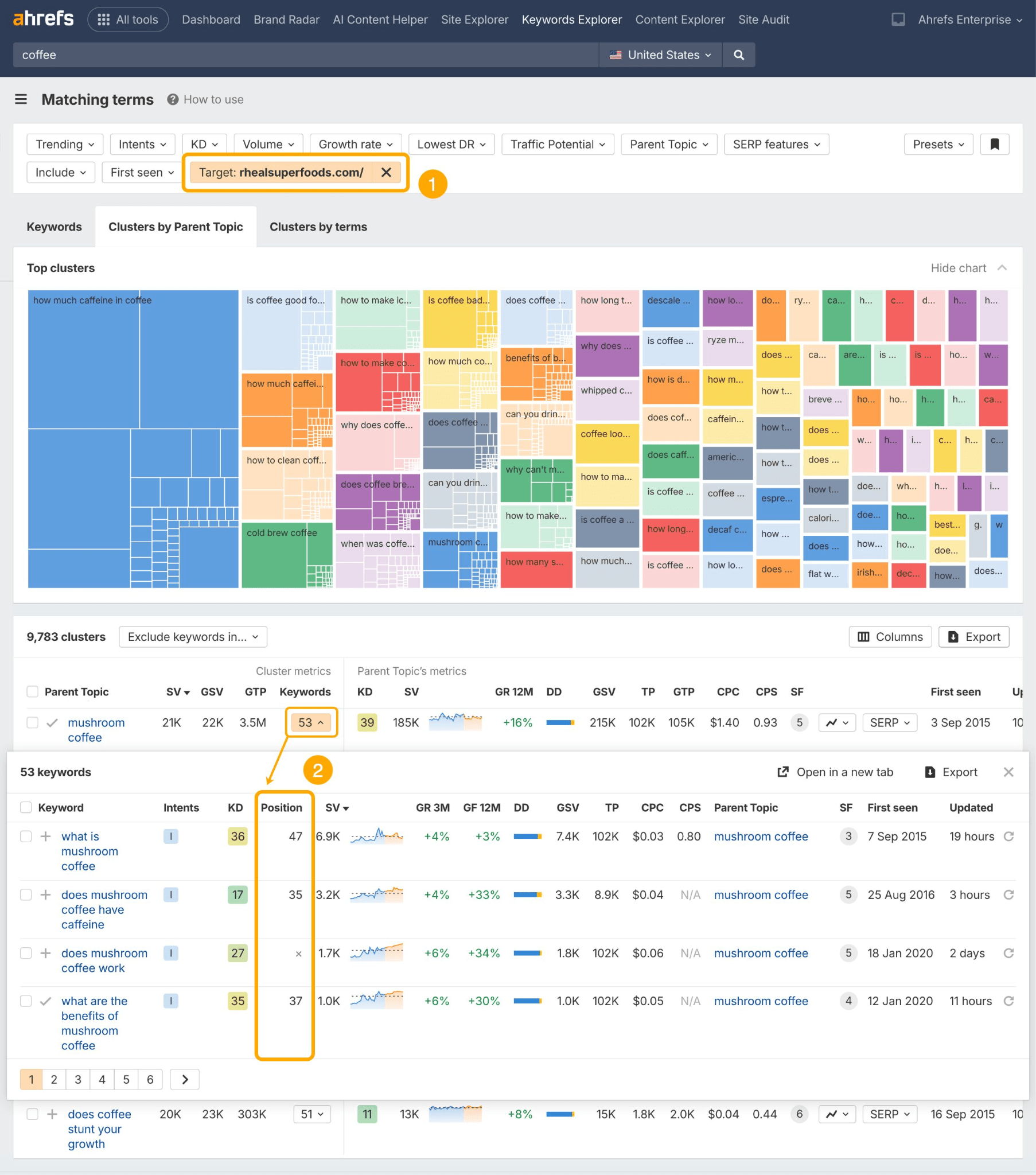

For this you should use instruments like Additionally Requested or Ahrefs Guardian Subjects in Ahrefs Key phrase Explorer.

Simply search a key phrase, head to the Matching Phrases report, and take a look at the Clusters by Guardian Matter tab.

Then hit the Questions tab for pre-clustered, long-tail queries to focus on in your content material…

To see how a lot possession you might have over present long-tail question permutations, add a Goal filter in your area.

Content material clusters aren’t new. However proof factors to them being of even better significance in LLM search.

All the issues that Google couldn’t resolve at the moment are being handed over to AI.

LLM search can deal with multi-step duties, multi-modal content material, and reasoning, making it fairly formidable for job help.

Going again to the ChatGPT analysis talked about earlier, Metehan Yeşilyurt discovered that 75% of AI prompts are instructions—not questions.

This means {that a} vital variety of customers are turning to AI for job completion.

In response, you might need to begin motion mapping: contemplating all of the potential duties your prospects will need to full that will not directly contain your model or its merchandise.

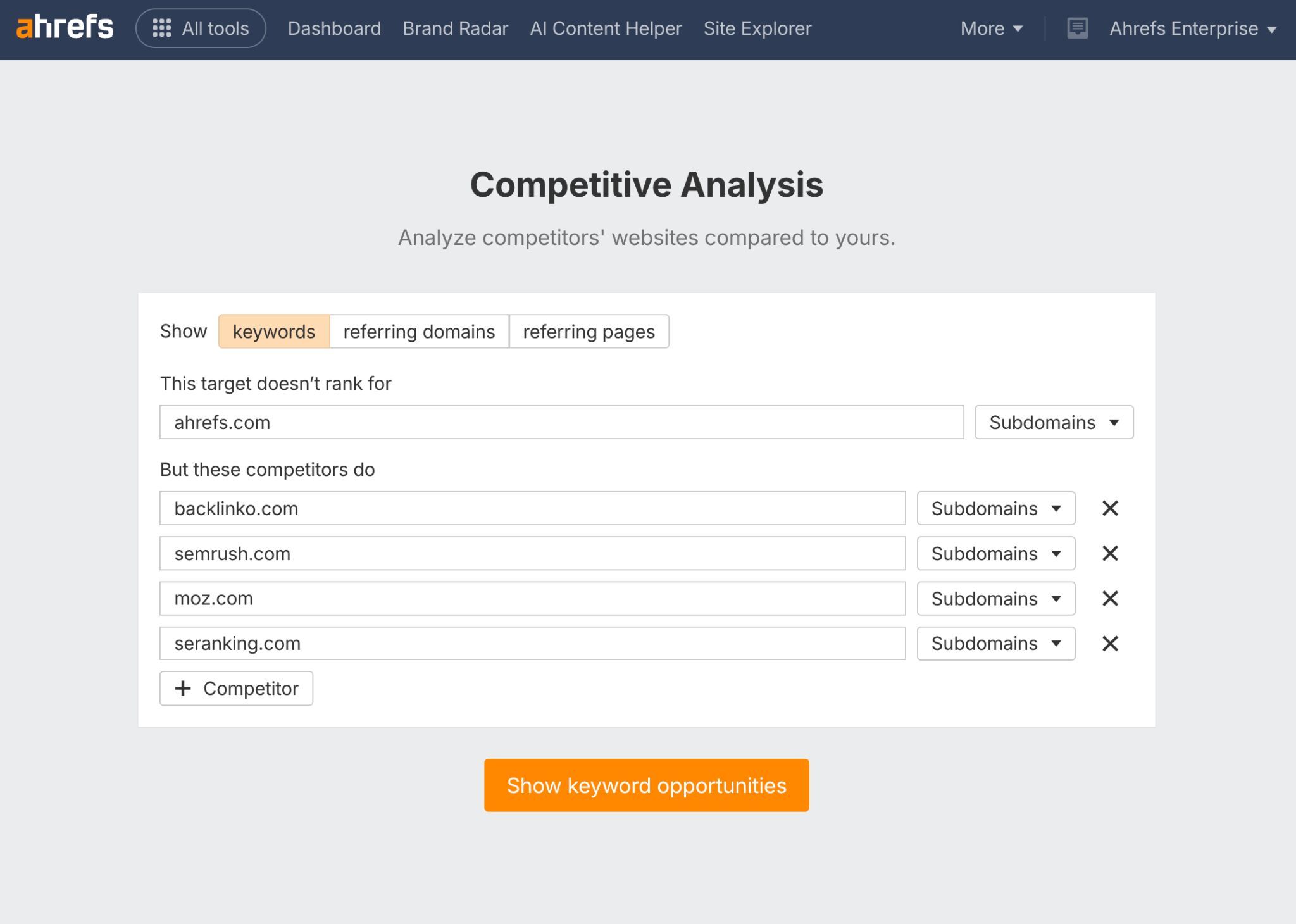

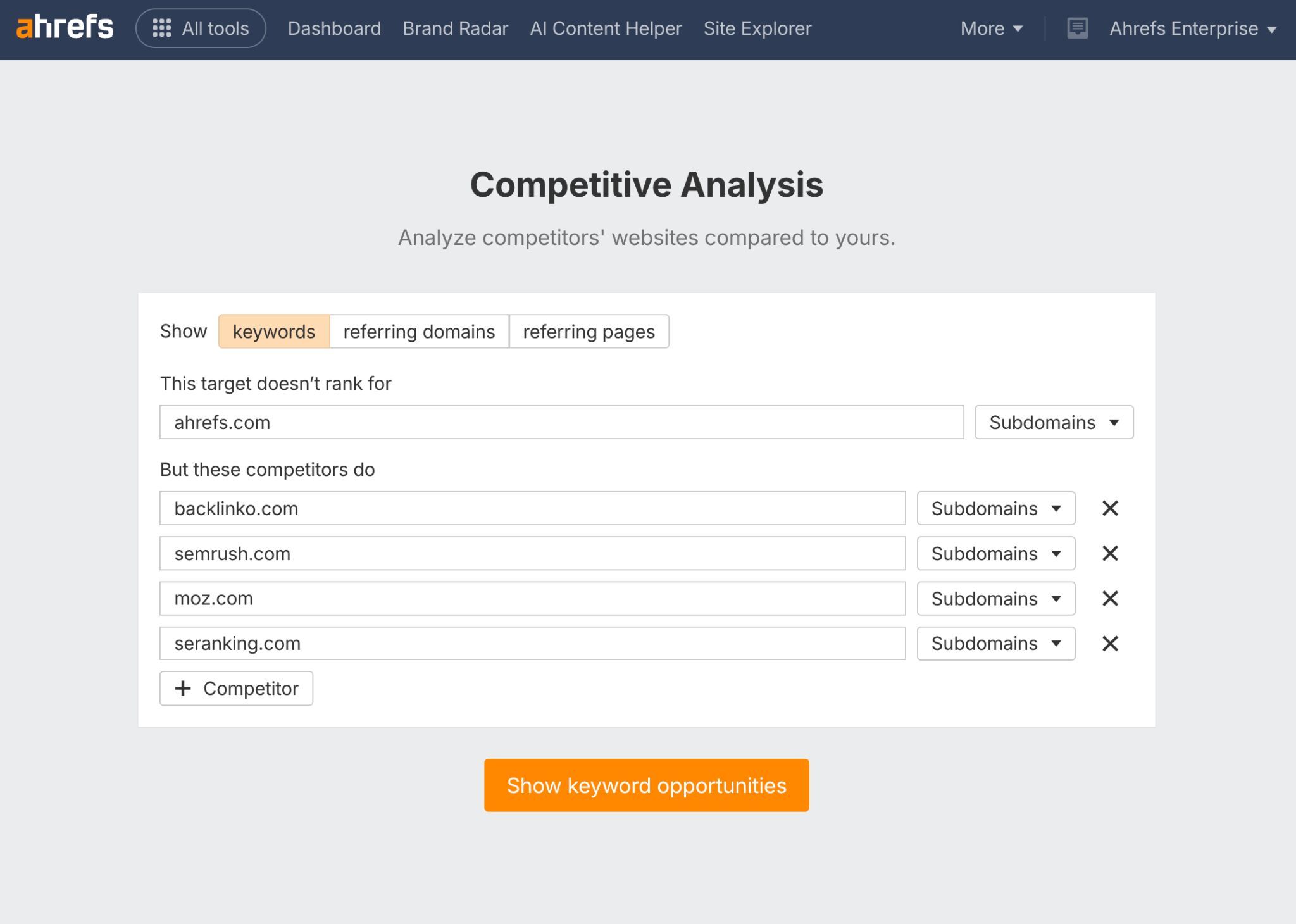

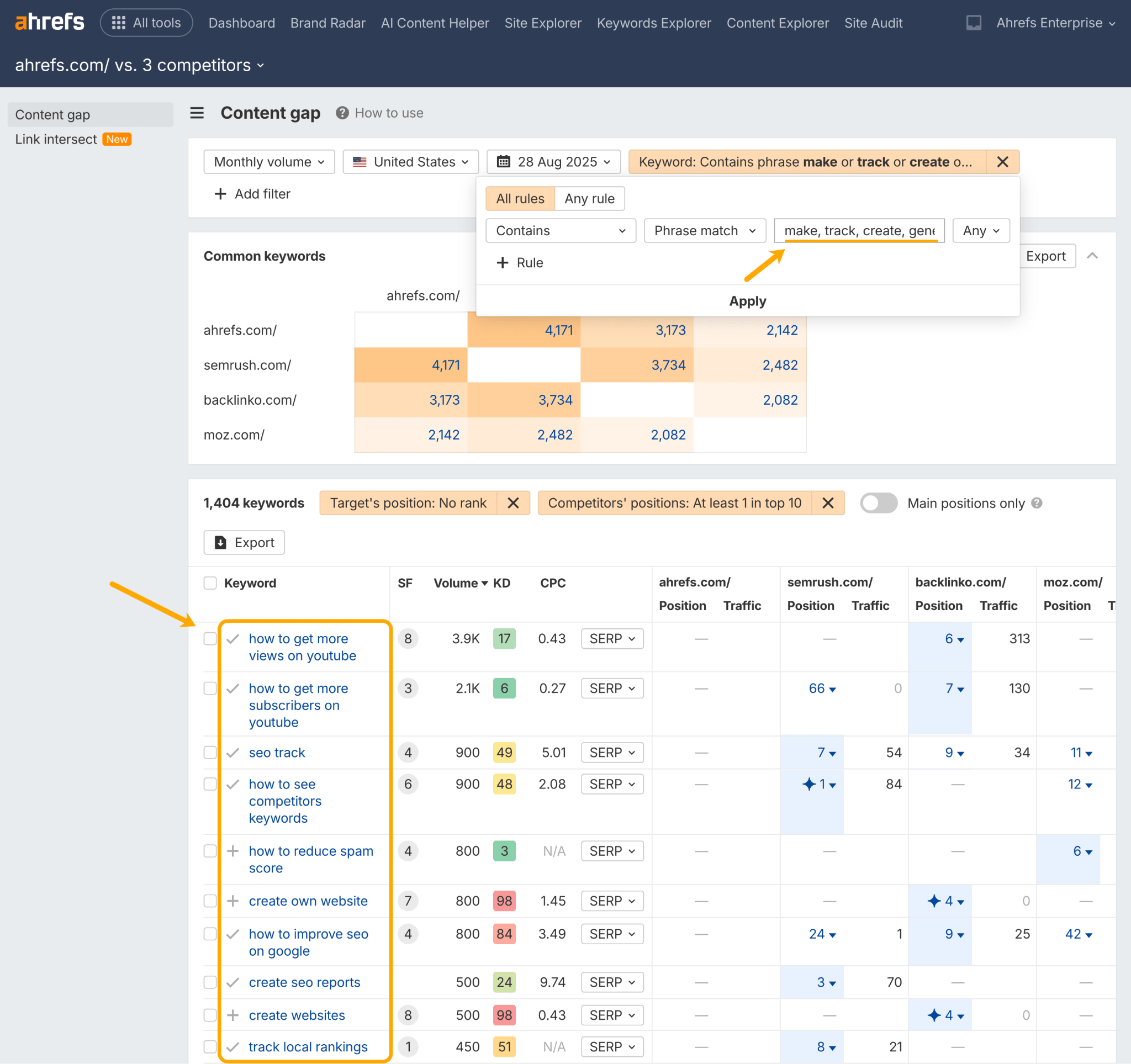

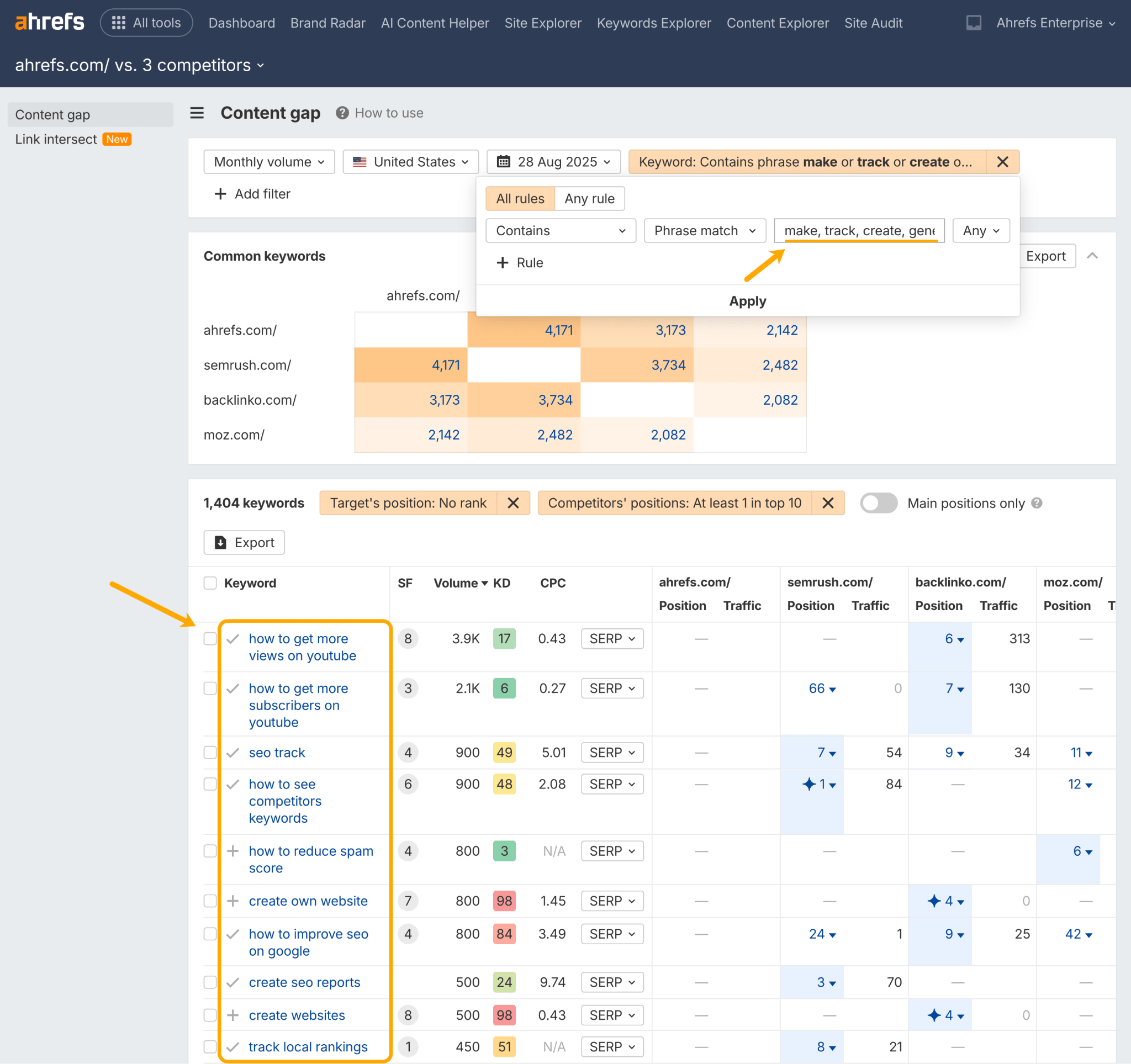

To map buyer duties, head to Ahrefs Competitor Evaluation and arrange a search to see the place your opponents are seen–however you’re not.

Then filter by related motion key phrases (e.g. “make”, “monitor”, “create”, “generate”) and query key phrases (e.g. “easy methods to” or “how can” ).

As soon as you recognize what core actions your viewers desires to take, create content material to help these jobs-to-be-done.

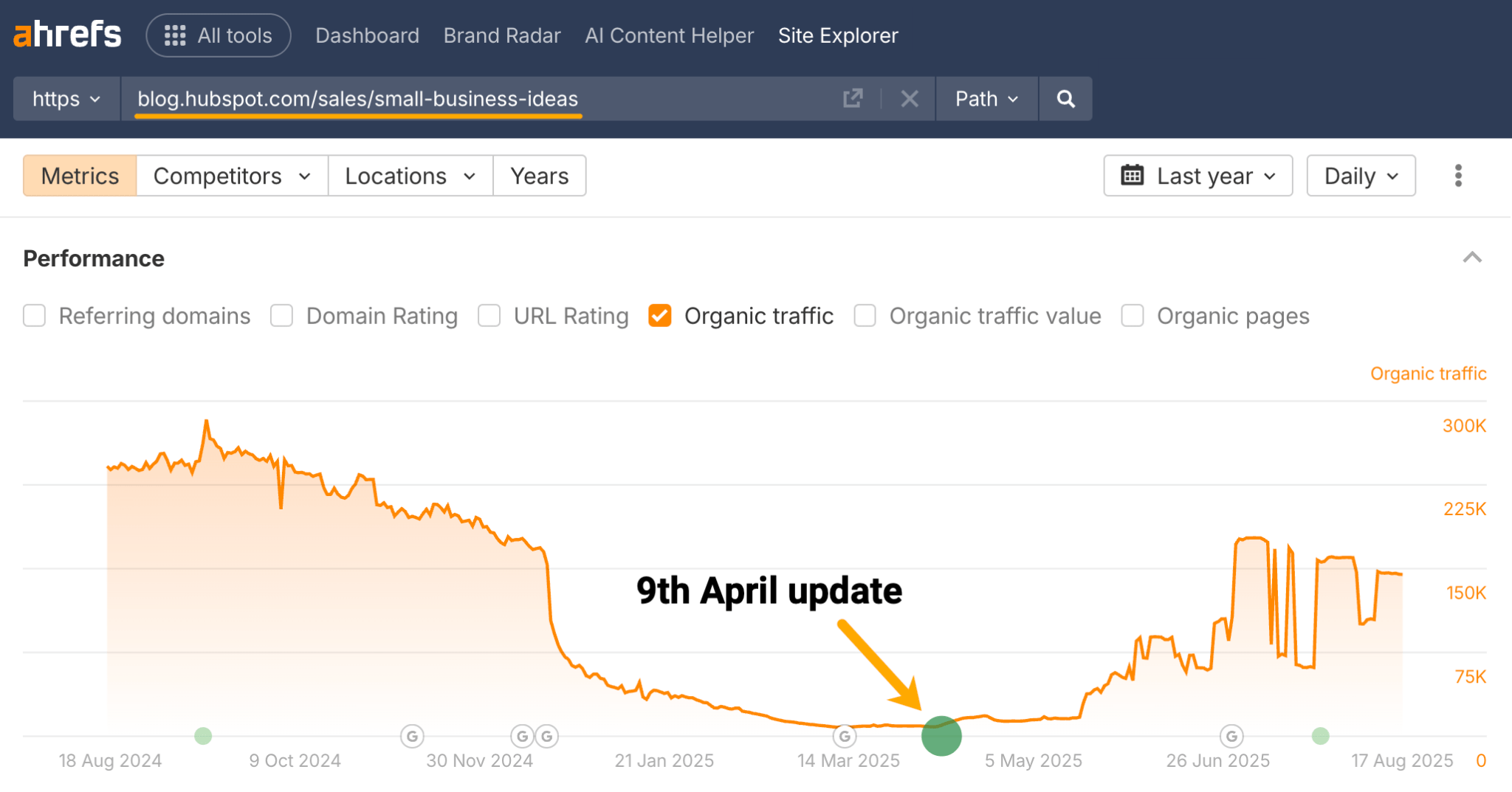

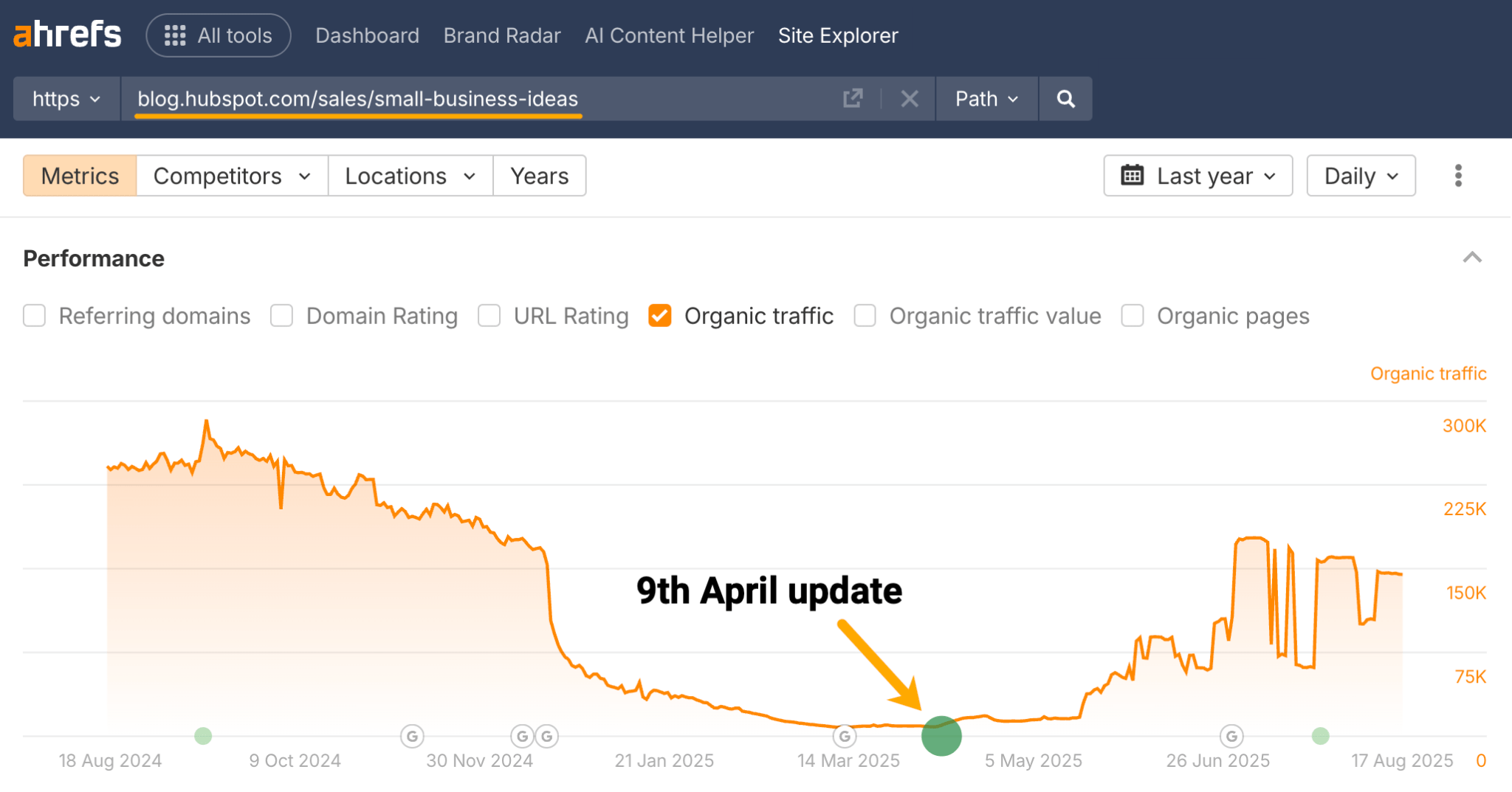

We analyzed 17 million citations throughout 7 AI search platforms, and located that AI assistants want citing brisker content material.

Content material cited in AI is 25.7% brisker than content material in natural SERPs, and AI assistants present a 13.1% desire for extra just lately up to date content material.

ChatGPT and Perplexity specifically prioritize newer pages, and have a tendency to order their citations from latest to oldest.

Why does freshness matter a lot? As a result of RAG (retrieval-augmented technology) often kicks in when a question requires contemporary data.

If the mannequin already “is aware of” the reply from its coaching knowledge, it doesn’t want to look.

However when it doesn’t—particularly with rising topics—it appears for the newest data obtainable.

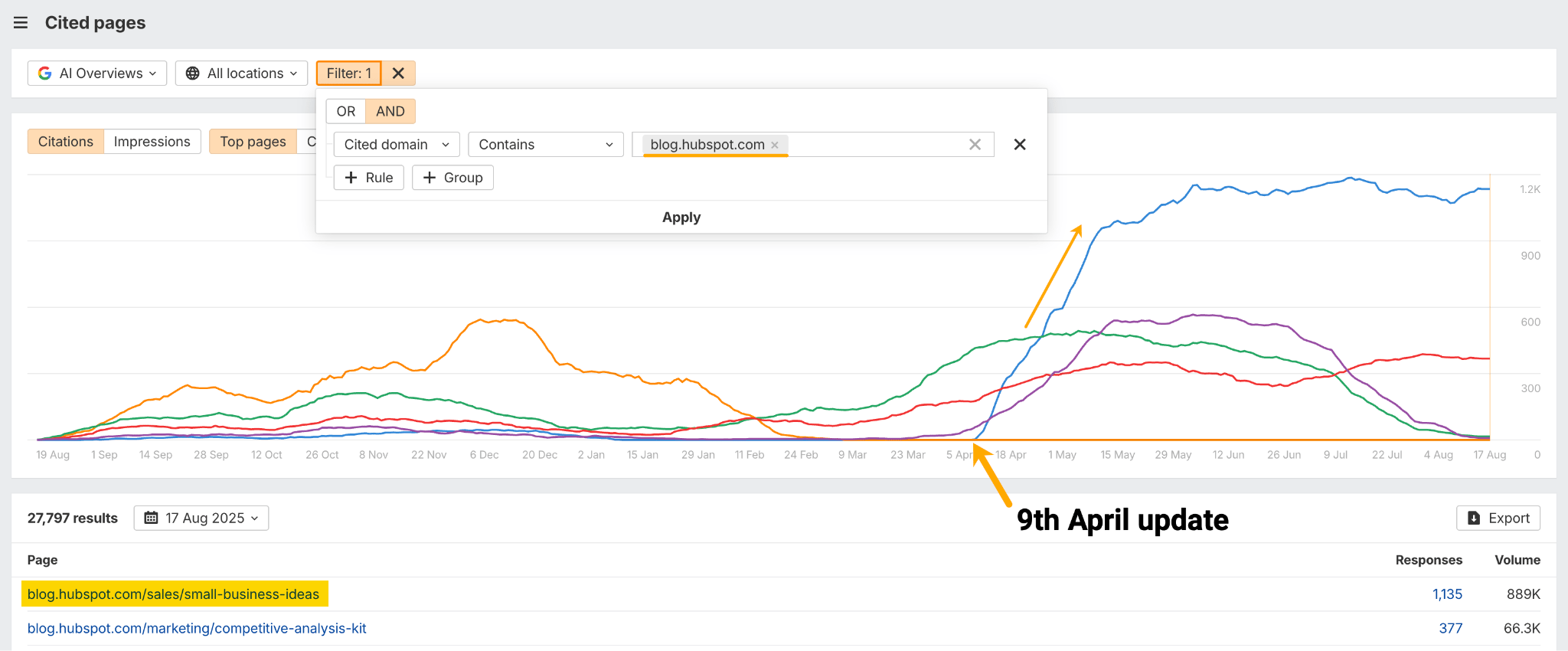

Within the instance under, Hubspot sees 1,135 new AI Overview mentions from a single content material replace, based mostly on Ahrefs Web site Explorer knowledge.

The article is now their most cited weblog in AI Overviews, in response to Ahrefs Model Radar.

Our analysis means that maintaining your content material up to date can enhance its attraction to AI engines on the lookout for the newest data.

To your content material to be cited in AI solutions, you must enable AI bots to crawl it.

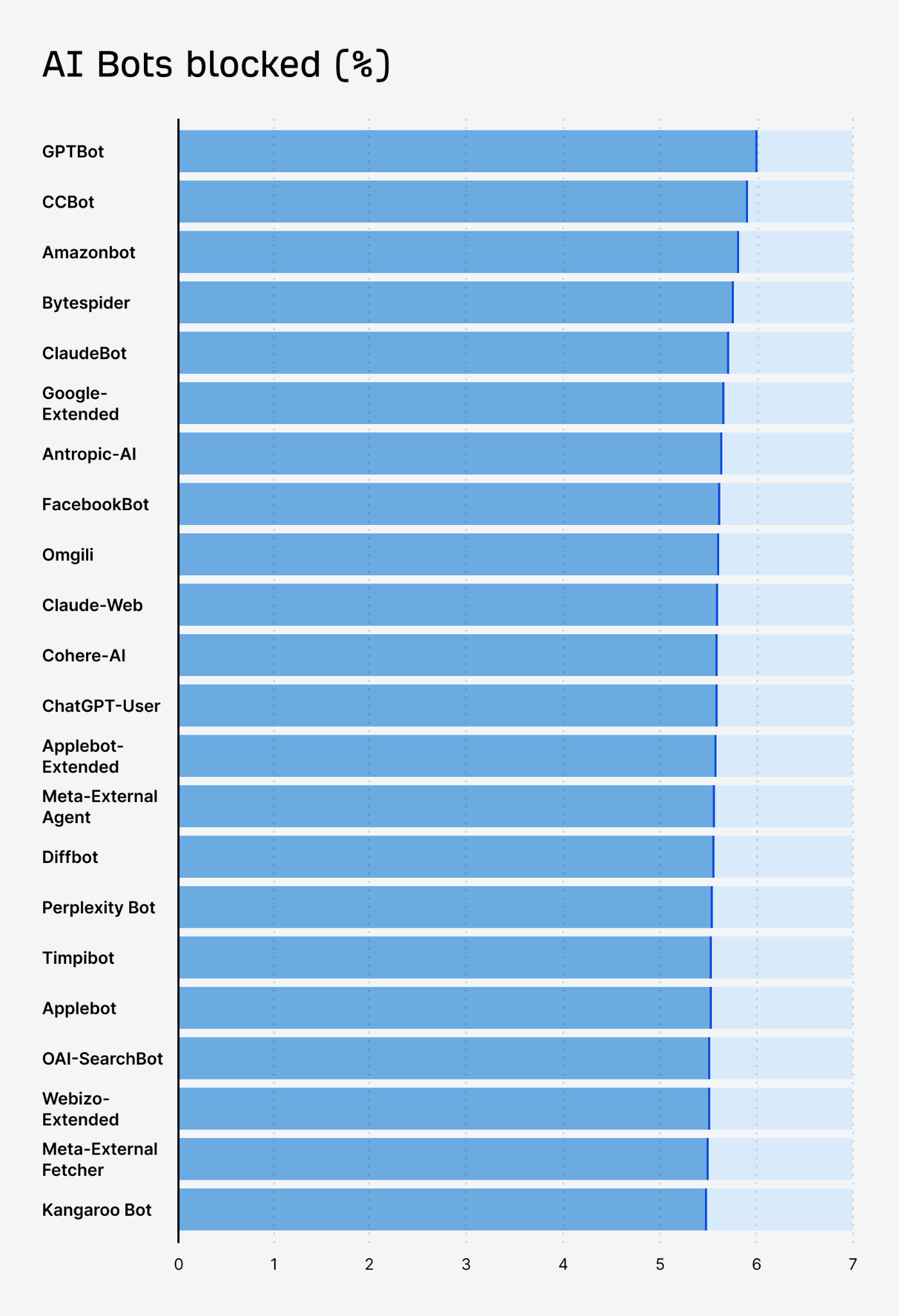

A rising variety of websites have began blocking AI scrapers.

Going by our personal analysis, ~5.9% of all web sites disallow OpenAI’s GPTBot over considerations about knowledge use or useful resource pressure.

Whereas that’s comprehensible, blocking may also imply forfeiting future AI visibility.

In case your purpose is to have ChatGPT, Perplexity, Gemini and different AI assistants point out your model, double-check your robots.txt and firewall guidelines to ensure you’re not by accident blocking main AI crawlers.

Be sure to let the reputable bots index your pages.

This fashion, your content material might be a part of the coaching or reside looking knowledge that AI assistants draw on—supplying you with a shot at being cited when related queries come up.

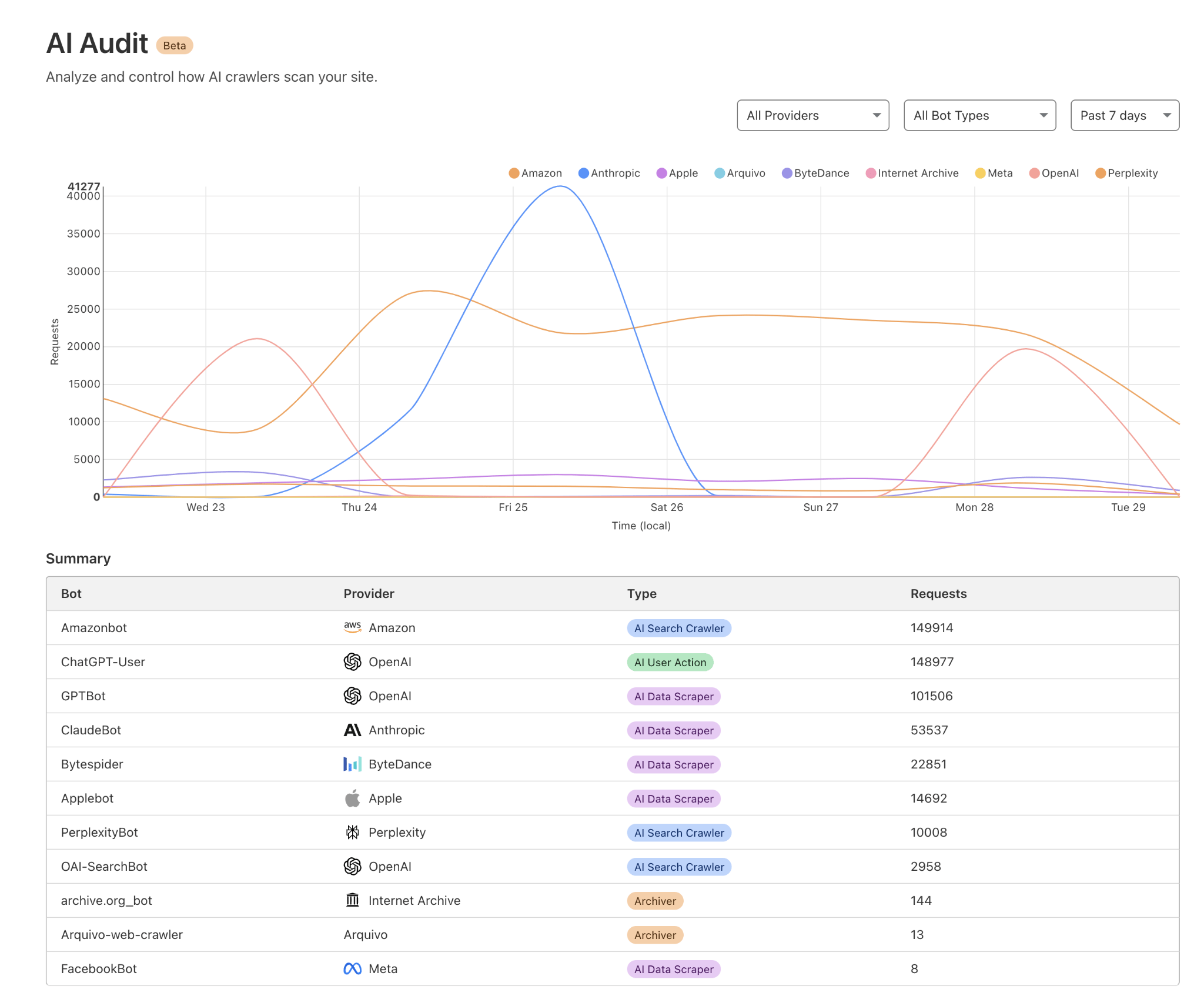

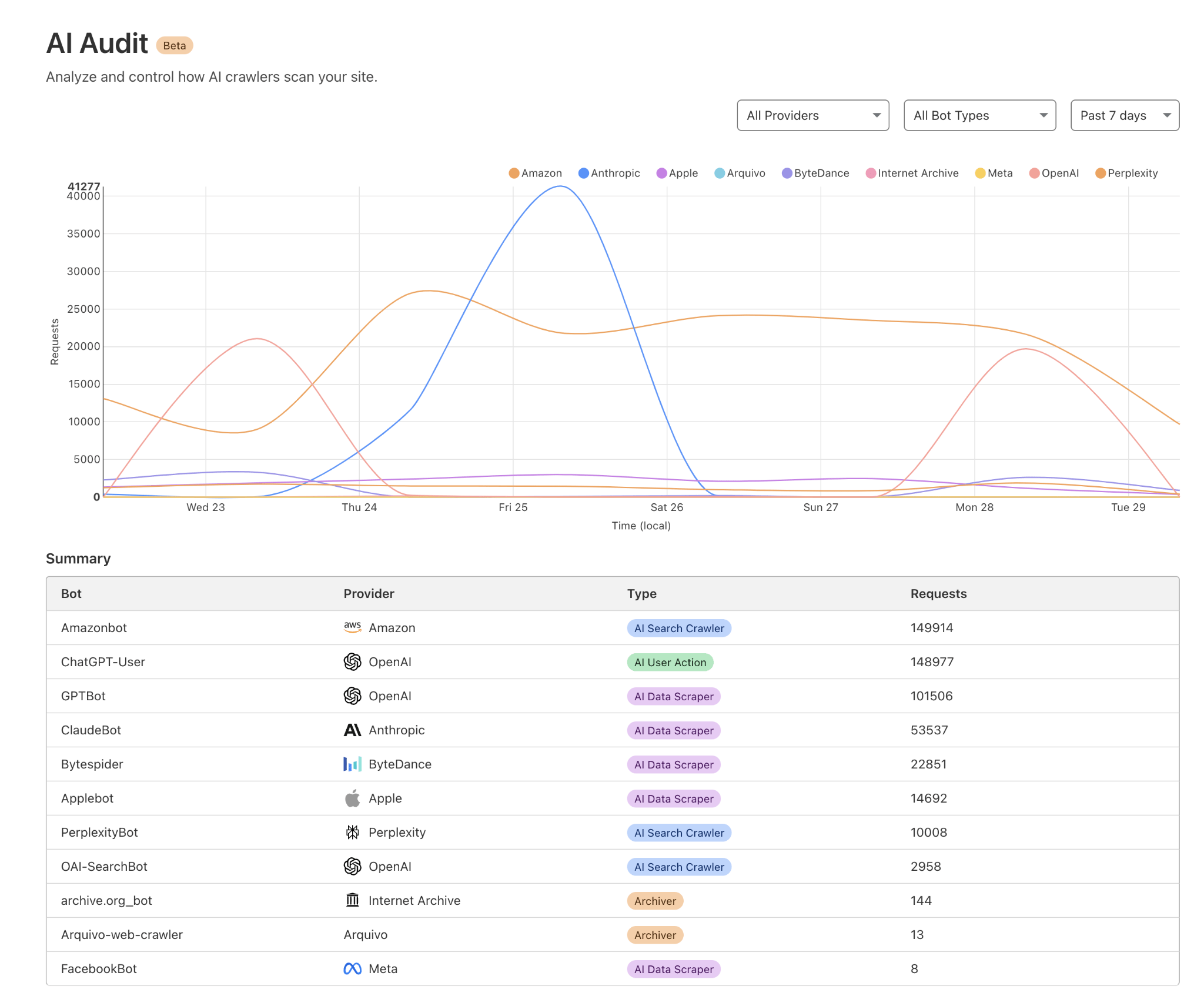

You’ll be able to verify which AI bots are accessing your website by checking your server logs, or utilizing a instrument like Cloudflare AI audit.

The highest-cited domains fluctuate lots between completely different LLM search surfaces. Being a winner in a single doesn’t assure presence in others.

In reality, among the many high 50 most-mentioned domains throughout Google AI Overviews, ChatGPT, and Perplexity, we discovered that solely 7 domains appeared on all three lists.

Meaning a staggering 86% of the sources had been distinctive to every assistant.

Google leans by itself ecosystem (e.g. YouTube), plus user-generated content material—particularly communities like Reddit and Quora.

ChatGPT favors publishers and media partnerships—notably information shops like Reuters and AP—over Reddit or Quora.

And Perplexity prioritizes numerous sources, particularly world and area of interest websites—e.g. well being or region-specific websites like tuasaude or alodokter.

There’s no one-size-fits-all quotation technique. Every AI assistant surfaces content material from completely different websites.

If you happen to solely optimize for Google rankings, you would possibly dominate in AI Overviews however have much less of a presence in ChatGPT.

On the flip facet, in case your model is picked up in information/media it would present up in ChatGPT solutions—even when its Google rankings lag.

In different phrases, it’s price testing completely different methods for various LLMs.

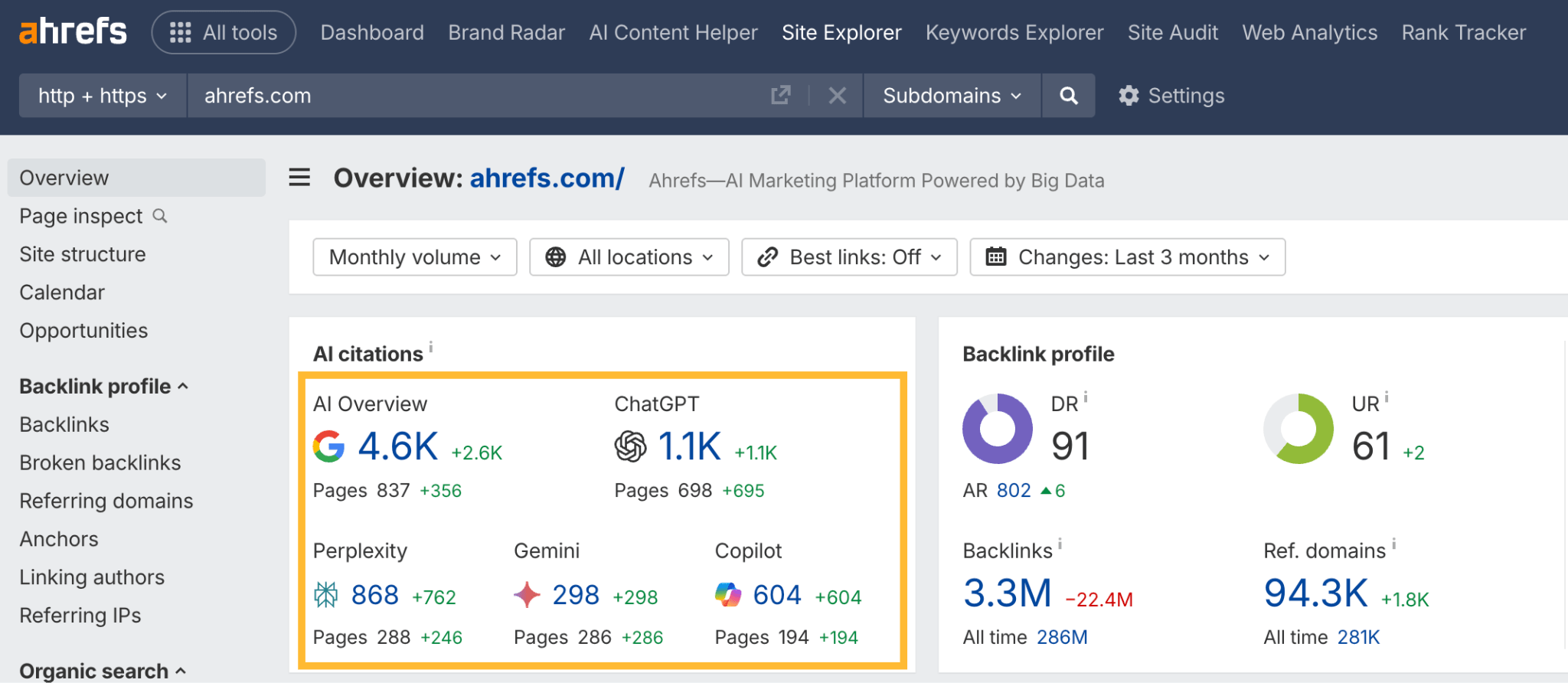

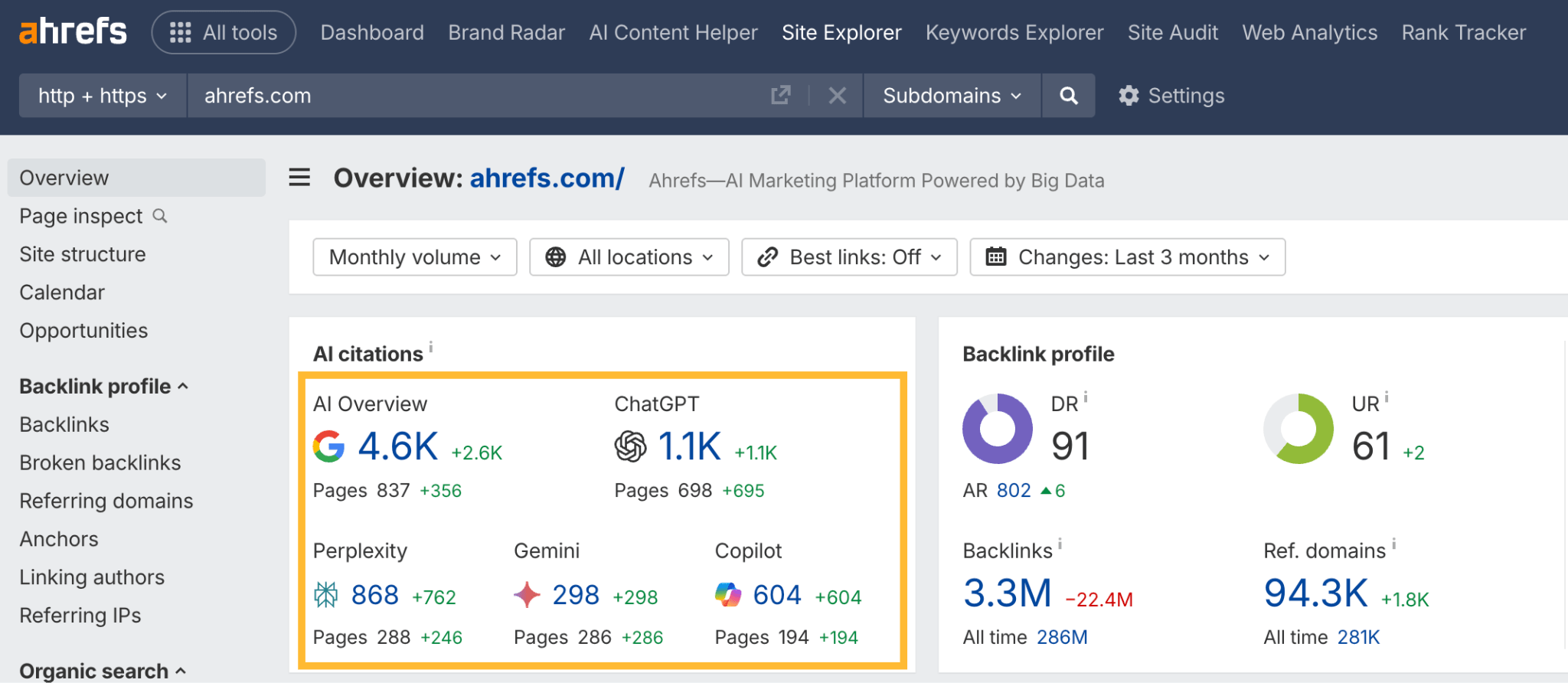

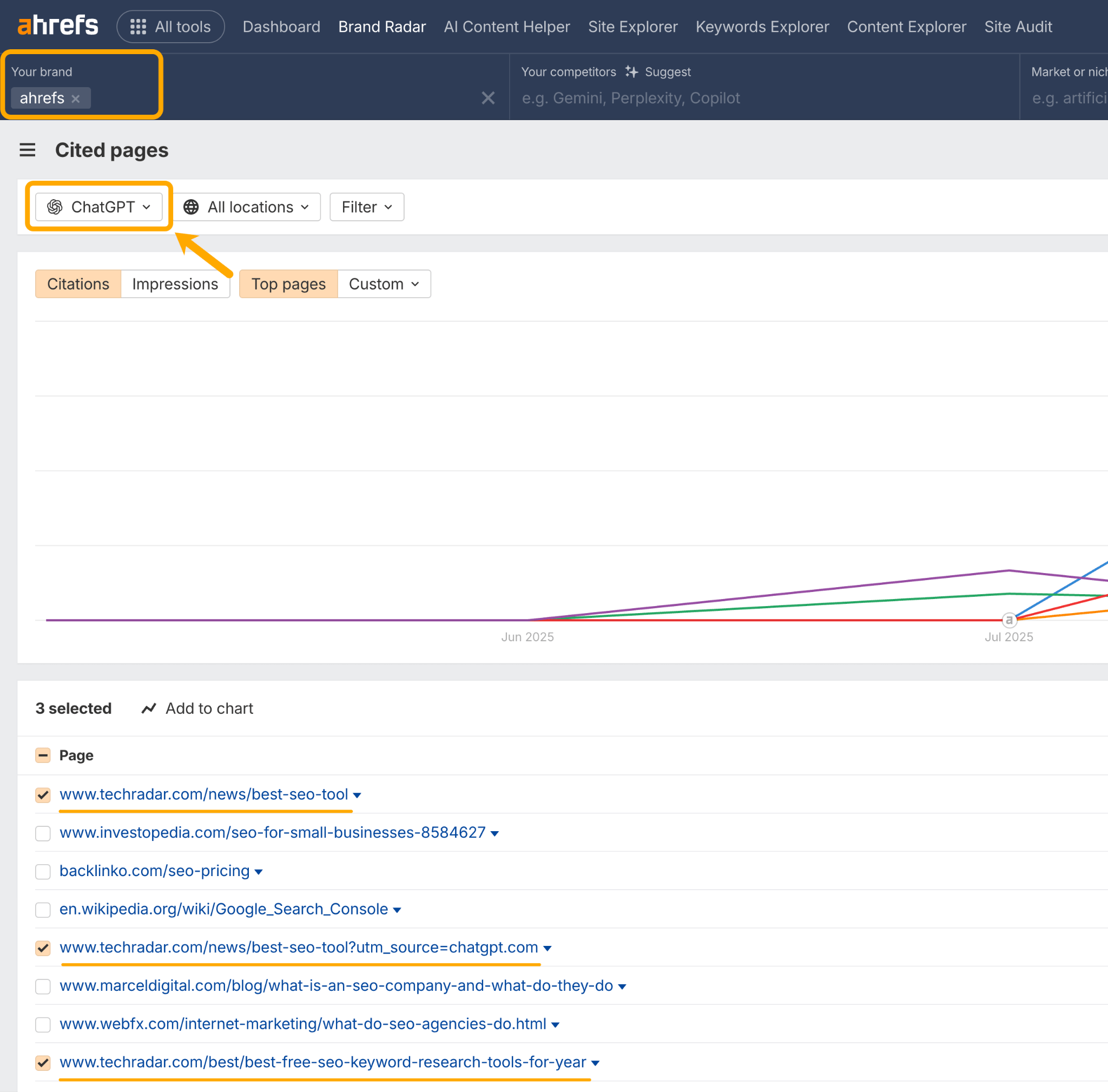

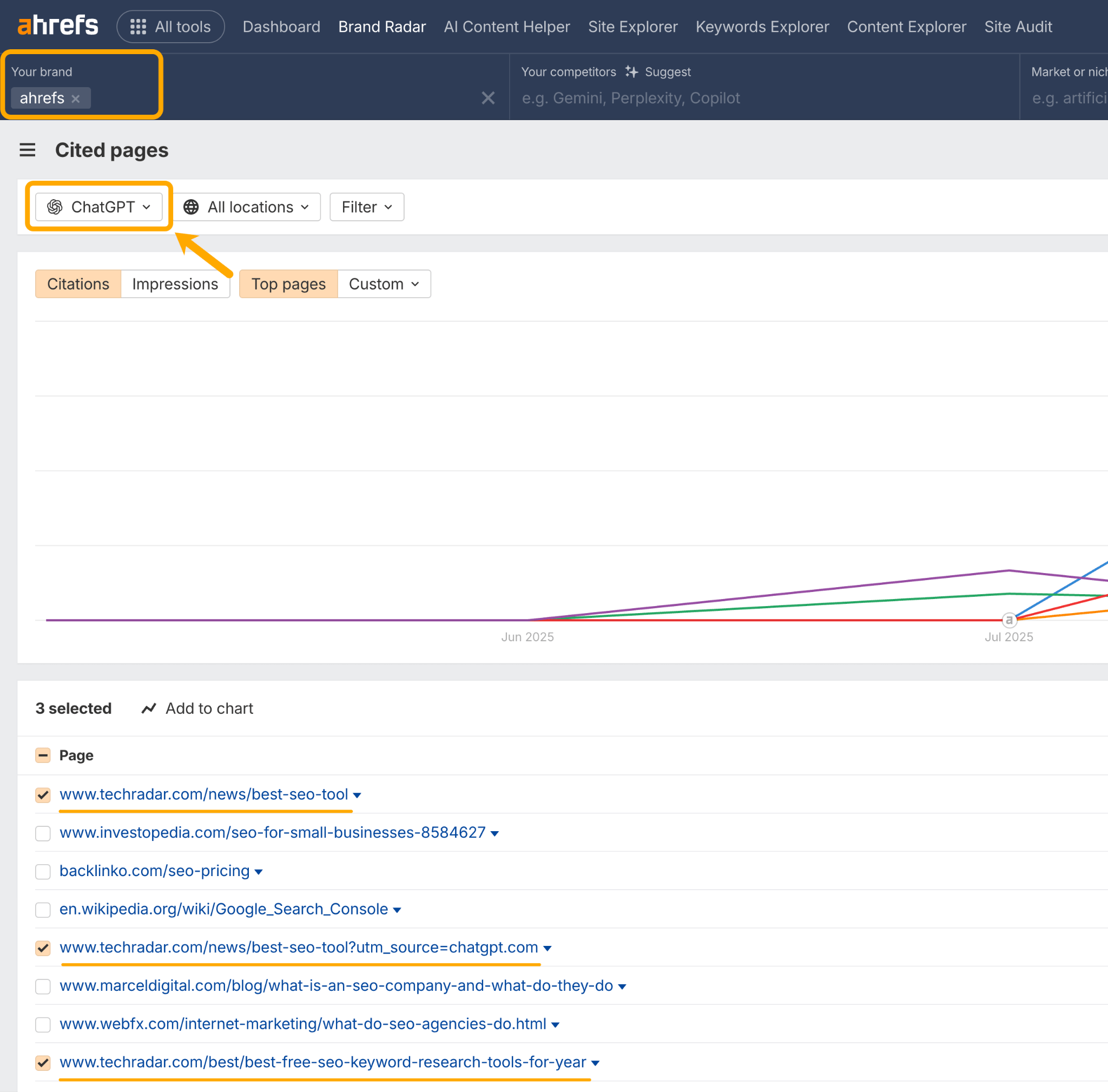

You need to use Ahrefs to see how your model seems throughout Perplexity, ChatGPT, Gemini, and Google’s AI search options.

Simply plug your area into Web site Explorer and have a look at the top-level AI quotation rely within the Overview report.

Then do a deeper dive within the Cited Pages report of Model Radar.

It will provide help to examine the completely different websites and content material codecs most well-liked by completely different AI assistants.

For instance, mentions of Ahrefs in AI Overviews have a tendency to tug from Zapier through “Finest” instrument lists.

Whereas in ChatGPT, we’re talked about extra in Tech Radar “Finest” instrument lists.

And in Perplexity our high opponents are controlling the narrative with “vs” content material, “evaluations”, and “instrument” lists.

With this data, we can:

- Maintain Zapier writers conscious of our product developments, in hopes that we’ll proceed being beneficial in future instrument guides, to drive AI Overview visibility.

- Ditto for Tech Radar, to earn constant ChatGPT visibility.

- Create/optimize our personal variations of the competitor content material that’s being drawn into Perplexity, to take again management of that narrative.

Closing ideas

Numerous this recommendation could sound acquainted—as a result of it’s largely simply search engine optimization and model advertising and marketing.

The identical components that drive search engine optimization—authority, relevance, freshness, and accessibility—are additionally what make manufacturers seen to AI assistants.

And tons of current developments simply show it: ChatGPT has just lately been outed for scraping Google’s search outcomes, GPT-5 is leaning closely on search fairly than saved information, and LLMs are shopping for up search engine hyperlink graph knowledge to assist weight and prioritize their responses.

By that measure, search engine optimization may be very a lot not lifeless—the truth is it’s doing quite a lot of the heavy lifting.

So, the takeaway is: double down on confirmed search engine optimization and brand-building practices in the event you additionally need AI visibility.

Generate high-quality model mentions, create structured and related content material, preserve it contemporary, and ensure it may be crawled.

As LLM search matures, we’re assured these core rules will preserve you seen.