Sci-Fi Author: In my book, I invented the Torment Nexus as a cautionary tale.

Tech Company: At long last, we have created the Torment Nexus from classic sci-fi novel Don’t Create The Torment Nexus.

So goes one of the truly great social media posts of our era. Last week, law students at the University of North Carolina School of Law had an opportunity to test out the legal profession’s equivalent of the Torment Nexus with a mock trial placing AI chatbots (specifically ChatGPT, Grok, and Claude) in the role of jurors deciding the fate of an accused defendant. For all the faults of the modern jury system, should we replace it with an algorithm built at the behest of a moron who wants to rewrite history to make sure his personal bot injects an aside about “white genocide” into every recipe request?

As it turns out, the answer is still no. At least as a 1-to-1 replacement.

The experiment centered on a mock theft case brought under the make-believe “AI Criminal Justice Act of 2035.” Under the watchful eye of Professor Joseph Kennedy, serving as the judge, law students put on the case of Henry Justus, an African American high school senior charged with theft. The bots received a real-time transcript of the proceedings and then, like an unholy episode of Judge Judy: The Singularity Edition, the algorithmic jurors deliberated.

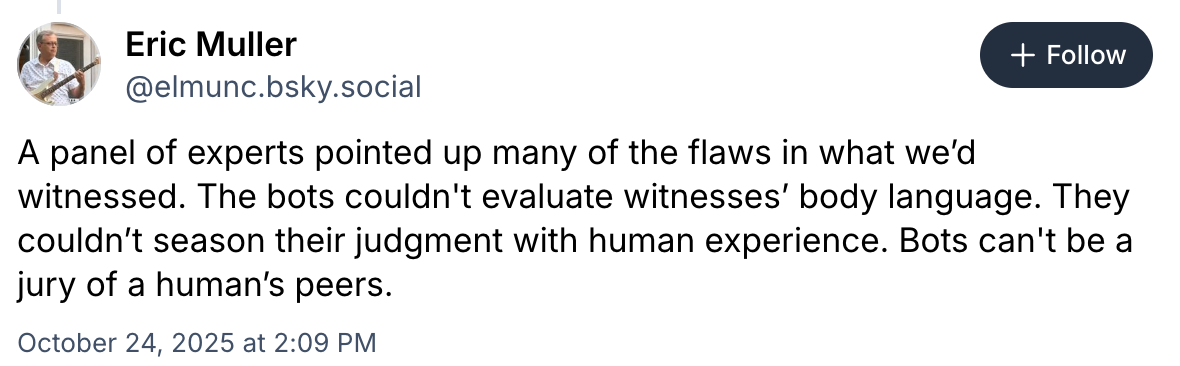

Professor Eric Muller left with some concerns.

The idea that robots can solve the justice system’s bias (and save the government $15/day per juror in the process) is the kind of Silicon Valley pipe dream that generates another round of funding to be heaped on the capex fire. Venture capitalists and tech bros may market this as “disrupting empathy” or whatever, but we’re just swapping one bias for another: human for algorithmic, emotional for opaque, personal for corporate. So far, the robots as a whole have proven to be efficient vectors of implicit bias, taking the unconscious biases of their designers and of the training data they’re given and spitting them back out with a deceptive coat of false neutrality.

Except Grok, of course, which is constantly being tinkered with to better exhibit explicit and very conscious bias.

And there’s this:

Since it’s Claude, I assume it got to “Ladies and Gentlemen of the–” and just threw up a “message will exceed the length limit” warning.

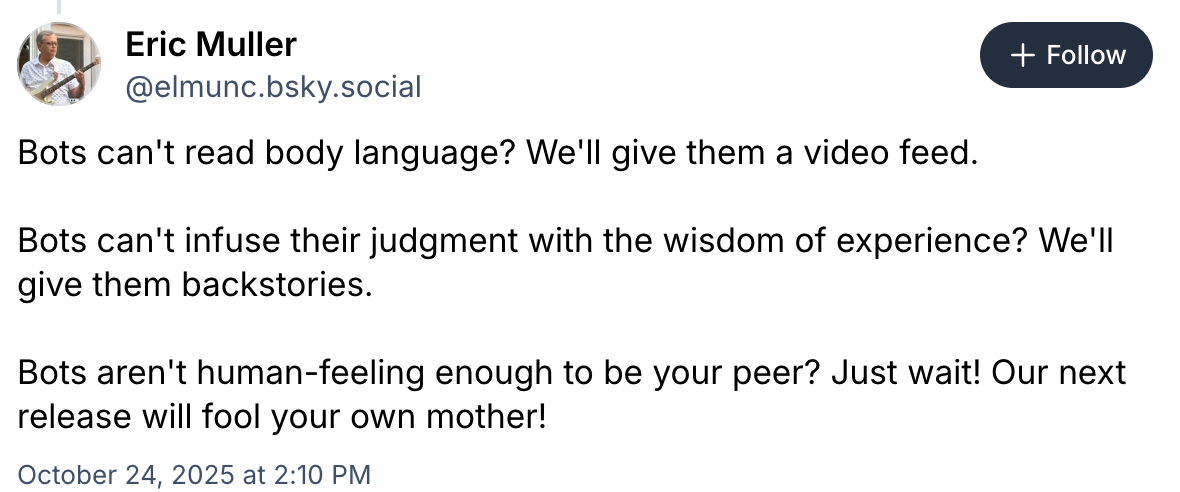

But a machine can’t tell whether a witness is lying based on their demeanor, because it can’t perceive that demeanor. It can only tell whether there’s an outright contradiction of a specific fact. Maybe it could conjure up some approximation of “doubt” if a witness exhibits inconsistent sentence structure or something, and that’s fine if you think the difference between an innocent man and a sociopath should hang on their grasp of Strunk & White. Which, honestly… fair. Muller’s deeper concern, though, is that the tech industry’s improvement death drive will take all the existing drawbacks and patch the symptoms without acknowledging the disease.

The thing with AI, aside from its inherently rickety funding model, is that (a) it’s very good at the tasks it’s good at, and (b) almost everyone pretends it’s good at the tasks it’s not good at. Can AI replace human jurors? No. No matter how bad human jurors are, a sycophantic calculator playing “word roulette” isn’t better.

That’s not to say there isn’t a role for AI in the jury process. It goes without saying that civil litigation carries much lower stakes than criminal cases, and a panel of robots could provide an opportunity to direct limited juror resources toward the criminal cases that matter more. Even within the criminal context, there could be (with responsible design and regulation beyond what we have right now) a role for AI in allowing jurors to query the evidence, so they don’t miss key answers buried in pages and pages of transcripts that they can’t otherwise search verbatim. Or in helping jurors visualize the points of disagreement between the parties.

Just because AI isn’t ready to replace humans in the jury box doesn’t mean it has nothing to offer, though. We just need to keep experimenting… in mock trials only.

Joe Patrice is a senior editor at Above the Law and co-host of Thinking Like A Lawyer. Feel free to email any tips, questions, or comments. Follow him on Twitter or Bluesky if you’re interested in law, politics, and a healthy dose of college sports news. Joe also serves as a Managing Director at RPN Executive Search.